Brian Baggett

Related Blogs

-

Aug 07, 2025

What is a Cloud Management Platform?

A cloud management platform (CMP) is part of a larger cloud fabric orchestration strategy that typically helps enterprise IT control...

-

Sep 15, 2022

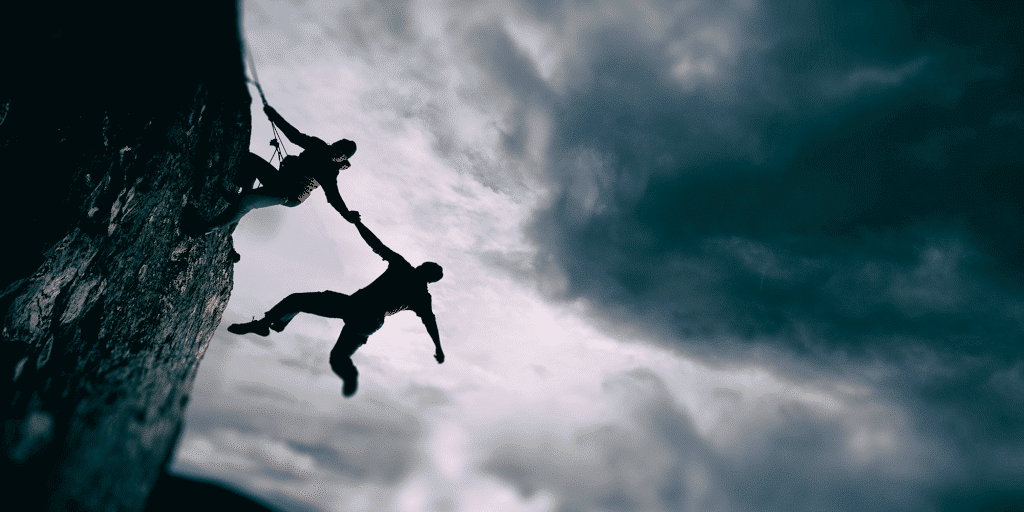

Terraform & CloudBolt | Turning IT Admins into Heroes

On a typical Monday at CloudBolt we have a weekly meeting called “Chalk Talk” to discuss industry trends. Recently, Sam...

-

Sep 17, 2019

Kubernetes Gains Traction

Although Kubernetes has been around for quite some time, the open-source container orchestration platform has recently gained traction with public...

-

Aug 27, 2019

DevOps Tools for Success

Agile software development practices have emerged as the preferred way to deliver digital solutions to market. Instead of defined stages...

-

Aug 13, 2019

AWS Well-Architected Framework and CloudBolt

As enterprises and agencies continue to expand on cloud initiatives, there’s an increasing number of choices from public and private...

-

Aug 06, 2019

ServiceNow and CloudBolt Innovation—Want to Dance?

ServiceNow, as an enterprise Software-as-a-Service (SaaS) cloud offering for enterprises, delivers and manages just about everything. They lead the pack...

-

Jul 09, 2019

Jamf Powers its Developers with Scalable Resources Made Easy Using CloudBolt

Faster time to value for developers means that Jamf customers have new features in their hands before having to request...

-

Jul 02, 2019

Extensibility to Deliver Apps Anywhere in the Era of Cloud

The race to build digital solutions that entice us with new experiences continues its momentum for ride sharing, home sharing,...

-

Jun 18, 2019

The Hidden Cost of Cloud Sprawl

The hidden cost of cloud sprawl is similar to unaccounted costs associated with tasks in our everyday lives, like shopping...
