Kubernetes optimization has two goals: better application performance and more efficient use of resources. The two objectives complement each other in container orchestration strategies, but in practice teams struggle to raise resource utilization while keeping infrastructure costs under control.

Research by the Cloud Native Computing Foundation (CNCF) reveals that Kubernetes adoption has led to increased cloud spending for 49% of organizations, with 70% attributing overspending to overprovisioning. Kubernetes resource management is a challenge due to the distributed nature of Kubernetes, combined with varying workload requirements and infrastructure constraints.

Kubernetes resource management requires strategic planning, advanced tooling, and in-depth technical knowledge. This article covers cost reduction and performance tuning techniques for Kubernetes optimization.

Summary of Kubernetes optimization workflow

| Workflow phase | Key activities | Description |
|---|---|---|
| Discovery: Performance baselines | Establish 7-14 day measurement windows using kubectl top and metrics to capture representative workload patterns across CPU, memory, and cost dimensions. | Creates a data-driven foundation for optimization decisions by revealing actual resource usage patterns vs. allocated resources. |
| Analysis: Bottleneck identification | Analyze oversized container images, runtime misconfigurations, slow startup times, and infrastructure constraints using Docker history, kubectl events, and ML-powered pattern recognition. | Identifies high-impact optimization opportunities that are invisible to manual analysis, focusing efforts on areas with the maximum cost reduction potential. |
| Optimization: Implementation | Deploy HPA, VPA, and cluster autoscaling with custom metrics, advanced pod scheduling constraints, and system-level tuning for API server, networking, and storage performance. | Achieves automated resource rightsizing and intelligent scaling that adapts to workload patterns while maintaining application performance and availability. |
| Measurement: Impact validation | Execute performance regression testing, resource utilization analysis, and cost impact assessment using automated load tests and Prometheus queries to validate optimization outcomes. | Quantifies optimization success through measurable metrics like cost reduction percentages, performance improvements, and resource efficiency gains. |
| Continuous refinement | Implement closed-loop optimization systems that automatically detect workload pattern changes and adjust resource allocations using ML-powered analysis and bidimensional autoscaling. | Maintains optimal efficiency over time without manual intervention, typically delivering cost reductions while preserving application performance. |

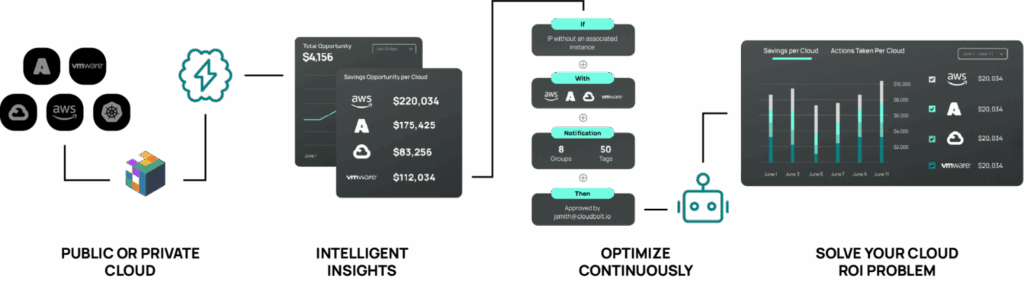


Kubernetes resource management fundamentals

Kubernetes resource management operates through two primary mechanisms: resource requests and resource limits.

Resource requests

Resource requests define the minimum amount of CPU and memory a container needs to run, directly influencing pod scheduling decisions by the kube-scheduler. The scheduler uses these values to determine which nodes have sufficient available resources to accommodate new pods.

Resource limits

Resource limits establish the maximum amount of resources a container can consume, acting as hard constraints enforced by the kubelet and container runtime.

CPU resources are measured in millicores (m), where 1000m equals one CPU core. Memory resources are specified in bytes, with common units including Mi (mebibytes) and Gi (gibibytes).
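To make the units concrete, here is a minimal Python sketch that converts the quantity strings used in this article into millicores and bytes. The function names are illustrative, and the parser deliberately handles only a subset of the Kubernetes quantity syntax (no exponent notation and no suffixes beyond tera).

```python
from decimal import Decimal

# Binary (power-of-two) and decimal suffixes accepted for memory quantities.
_MEMORY_SUFFIXES = {
    "Ki": 2**10, "Mi": 2**20, "Gi": 2**30, "Ti": 2**40,
    "k": 10**3, "M": 10**6, "G": 10**9, "T": 10**12,
}


def cpu_to_millicores(quantity: str) -> int:
    """Convert a CPU quantity such as "250m" or "0.5" to millicores."""
    if quantity.endswith("m"):
        return int(quantity[:-1])
    return int(Decimal(quantity) * 1000)


def memory_to_bytes(quantity: str) -> int:
    """Convert a memory quantity such as "256Mi" or "1G" to bytes."""
    # Try the longer (binary) suffixes before the single-letter decimal ones.
    for suffix in sorted(_MEMORY_SUFFIXES, key=len, reverse=True):
        if quantity.endswith(suffix):
            number = Decimal(quantity[: -len(suffix)])
            return int(number * _MEMORY_SUFFIXES[suffix])
    return int(quantity)  # no suffix: the value is already in bytes
```

Note that "1Gi" (1,073,741,824 bytes) and "1G" (1,000,000,000 bytes) differ by about 7%, which matters when comparing requests against usage reported in bytes.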

Resource termination

When a container exceeds its memory limit, the kernel's OOM killer terminates it with SIGKILL, and Kubernetes reports the container's termination reason as OOMKilled. CPU limits work differently: a container that reaches its CPU limit is throttled rather than killed, because the kernel caps its CPU time through Completely Fair Scheduler (CFS) quotas.

resources:
  requests:
    memory: "256Mi"
    cpu: "250m"
  limits:
    memory: "512Mi"
    cpu: "500m"

Resource allocation lifecycle

The resource allocation lifecycle begins when the API server receives a pod specification containing resource requests. The scheduler first filters out nodes that cannot run the pod and then scores the remaining ones (older documentation calls these steps predicates and priority functions), considering factors like resource availability, node affinity rules, and taints/tolerations.

The scheduler maintains a resource view of each node, tracking allocatable resources (total node resources minus system reservations) and current resource commitments from existing pods.

Once the pod is scheduled, the kubelet on the target node validates resource availability before admitting it. The container runtime (containerd or CRI-O, or Docker Engine through the cri-dockerd adapter) then configures cgroups for each container. Requests are reservations in the scheduler's accounting, while limits are hard caps enforced by the kernel. For CPU, requests translate into CFS weights (cpu.shares on cgroup v1, cpu.weight on cgroup v2), which give containers proportional CPU time under contention. Memory requests are not enforced through cgroups by default; instead, the kubelet uses them together with the pod's QoS class to set /proc/<pid>/oom_score_adj, which makes the kernel less likely to kill processes with larger requests during memory pressure.
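The arithmetic behind the CPU translation is simple enough to sketch. The Python below assumes cgroup v1 conventions (1024 shares per core, a floor of 2 shares) and the default 100 ms CFS period; the function names are illustrative, and cgroup v2 nodes map shares onto a different weight scale.

```python
CFS_PERIOD_US = 100_000  # default CFS period (100 ms), in microseconds


def cpu_shares(request_millicores: int) -> int:
    """cgroup v1 cpu.shares for a CPU request: one full core equals 1024 shares."""
    return max(2, request_millicores * 1024 // 1000)


def cfs_quota_us(limit_millicores: int) -> int:
    """CPU time (microseconds) the container may use per CFS period."""
    return limit_millicores * CFS_PERIOD_US // 1000


# Values from the resources example earlier in this section.
shares = cpu_shares(250)    # request of 250m
quota = cfs_quota_us(500)   # limit of 500m
```

With a 500m limit, the container gets 50 ms of CPU time in every 100 ms period; once that is used up, it is throttled until the next period begins.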

The kubelet continuously collects resource usage through its embedded cAdvisor and exposes it for metrics-server to scrape. When pods terminate, their resource allocations are released, updating the node’s available resource calculations for future scheduling decisions.

QoS classes and their impact on the lifecycle

Kubernetes automatically assigns Quality of Service (QoS) classes to pods based on their resource specifications, creating a three-tier priority system for resource contention scenarios.

- Guaranteed

- Burstable

- BestEffort

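These classes determine how pods are treated under node resource pressure. They do not affect scheduling order, which is governed by pod priority (PriorityClass). When an eviction threshold is breached, the kubelet first evicts pods whose usage exceeds their requests, which means BestEffort pods and Burstable pods running above their requests, taking pod priority into account. Guaranteed pods, and Burstable pods running below their requests, are evicted last and only in severe scenarios.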

Guaranteed QoS Class

Pods in which every container sets both CPU and memory limits, with requests equal to those limits. These pods are the last to be evicted under node pressure.

For example, the configuration below creates a pod where the container’s resource requests exactly match its limits, ensuring predictable resource allocation and maximum eviction protection.

# Guaranteed QoS - requests equal limits for all resources
apiVersion: v1
kind: Pod
metadata:
  name: guaranteed-pod
spec:
  containers:
    - name: app
      image: nginx
      resources:
        requests:
          memory: "512Mi"
          cpu: "250m"
        limits:
          memory: "512Mi"
          cpu: "250m"

Burstable QoS Class

Pods that do not meet the Guaranteed criteria but have at least one container with a CPU or memory request or limit. Typical cases are requests set lower than limits, or requests set with no limits at all. These pods can consume resources beyond their requests, up to their limits, when the node has spare capacity. During resource pressure, the kubelet evaluates these pods for eviction based on their resource usage relative to requests.

# Burstable QoS - requests differ from limits
apiVersion: v1
kind: Pod
metadata:
  name: burstable-pod
spec:
  containers:
    - name: app
      image: nginx
      resources:
        requests:
          memory: "256Mi"
          cpu: "125m"
        limits:
          memory: "512Mi"
          cpu: "500m"
---
# Burstable QoS - only requests specified
apiVersion: v1
kind: Pod
metadata:
  name: burstable-requests-only
spec:
  containers:
    - name: app
      image: nginx
      resources:
        requests:
          memory: "256Mi"
          cpu: "125m"

The first example demonstrates a container that can burst from its 256Mi/125m baseline up to the 512Mi/500m limits when resources are available. The second example shows a requests-only configuration: with no limits set (and no LimitRange defaults in the namespace), the container can consume whatever spare capacity the node has beyond its baseline reservation.

BestEffort QoS Class

Pods with no resource requests or limits specified on any container. The scheduler reserves no capacity for them, and they are evicted first when resource pressure occurs. They can consume any available node resources but have no guarantee that those resources will remain available.

The configuration below creates a pod with no resource constraints, allowing it to utilize any available node resources but providing no scheduling guarantees and incurring the highest eviction risk.

# BestEffort QoS - no requests or limits
apiVersion: v1
kind: Pod
metadata:
  name: besteffort-pod
spec:
  containers:
    - name: app
      image: nginx
      # No resources block specified
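The classification rules for the three classes can be summarized in a few lines of Python. This is a simplified sketch rather than the kubelet's implementation: it looks only at CPU and memory, ignores init containers, and compares quantities as strings, so it assumes equal values are written identically (for example "250m" on both sides rather than "250m" and "0.25").

```python
def qos_class(containers: list[dict]) -> str:
    """Classify a pod from the `resources` blocks of its containers."""
    any_set = False
    guaranteed = True
    for resources in containers:
        requests = resources.get("requests", {})
        limits = resources.get("limits", {})
        if requests or limits:
            any_set = True
        for name in ("cpu", "memory"):
            limit = limits.get(name)
            # A missing request defaults to the limit, so only the limit is mandatory.
            request = requests.get(name, limit)
            if limit is None or request != limit:
                guaranteed = False
    if not any_set:
        return "BestEffort"
    return "Guaranteed" if guaranteed else "Burstable"


# The four example pods from this section, reduced to their resources blocks.
guaranteed_pod = [{"requests": {"memory": "512Mi", "cpu": "250m"},
                   "limits": {"memory": "512Mi", "cpu": "250m"}}]
burstable_pod = [{"requests": {"memory": "256Mi", "cpu": "125m"},
                  "limits": {"memory": "512Mi", "cpu": "500m"}}]
requests_only_pod = [{"requests": {"memory": "256Mi", "cpu": "125m"}}]
besteffort_pod = [{}]
```

A pod is only Guaranteed if every container passes the check, which is why a single sidecar without limits downgrades the whole pod to Burstable.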

Manual resource configuration challenges

Manual resource configuration often leads to anti-patterns that compromise both performance and efficiency.

Resource overprovisioning

Developers set resource requests and limits based on peak theoretical usage rather than actual workload patterns. This approach wastes cluster resources and increases infrastructure costs.

Mismatched CPU-to-memory ratios

Applications with different resource consumption patterns (e.g., CPU-intensive vs. memory-intensive) require distinct allocation strategies. Setting uniform resource ratios across diverse workloads leads to resource wastage and poor bin-packing efficiency across cluster nodes.

Misconfigured request-to-limit ratio

Request-to-limit ratio misconfiguration creates performance instability. Setting limits too close to requests prevents applications from handling traffic spikes, while excessive gaps between requests and limits can cause resource contention and unpredictable performance.

#1 Discovery: Establish Kubernetes performance baselines and metrics

Establishing accurate performance baselines requires systematic data collection across multiple dimensions of cluster behavior. Define measurement windows that capture representative workload patterns, typically spanning 7-14 days to account for weekly traffic variations and batch job cycles.

# Collect baseline CPU and memory data across all pods

kubectl top pods --all-namespaces --sort-by=cpu

kubectl top pods --all-namespaces --sort-by=memory

# Node-level resource utilization baseline

kubectl top nodes

# Get detailed resource requests vs limits across cluster

kubectl get pods --all-namespaces -o jsonpath='{range .items[*]}{.metadata.namespace}{"\t"}{.metadata.name}{range .spec.containers[*]}{"\t"}{.resources.requests.cpu}{"\t"}{.resources.limits.cpu}{"\t"}{.resources.requests.memory}{"\t"}{.resources.limits.memory}{end}{"\n"}{end}'

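The kubectl top commands require metrics-server and return point-in-time snapshots of CPU and memory consumption across pods and nodes, so a 7-14 day baseline needs a metrics store such as Prometheus to retain history. The jsonpath command lists the configured requests and limits for every container; set side by side with the usage figures, it shows the gap between allocated and actual resources.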

Baselines must account for resource utilization patterns at pod, node, and cluster levels. A proper baseline captures CPU and memory utilization percentiles (50th, 90th, 95th, 99th) rather than simple averages, because averages hide the performance spikes that often drive overprovisioning decisions.
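A small worked example shows why percentiles and averages tell different stories. The Python sketch below uses the nearest-rank percentile method and made-up CPU samples (18 idle readings and two spikes); both the data and the function name are illustrative.

```python
import math


def percentile(samples: list[float], pct: int) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% of values at or below it."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


# Hypothetical CPU samples in millicores: mostly idle, with two short spikes.
cpu_samples = [100] * 18 + [900, 950]

summary = {p: percentile(cpu_samples, p) for p in (50, 90, 95, 99)}
mean = sum(cpu_samples) / len(cpu_samples)
```

Here the mean is 182.5m, a value the container almost never actually uses: the median and 90th percentile are 100m, while the 95th and 99th percentiles are 900m and 950m. Sizing requests near the median and limits near the high percentiles reflects the workload far better than sizing either from the average.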

Key performance indicators

Kubernetes workload optimization requires monitoring KPIs across multiple infrastructure and application layers.

Resource efficiency KPIs

These include CPU utilization percentage, memory utilization percentage, and resource request accuracy (actual usage vs. requested resources). These metrics reveal overprovisioning patterns and identify opportunities for rightsizing.

Performance KPIs

These focus on application responsiveness and reliability. Pod startup time directly impacts scaling responsiveness and user experience during traffic spikes. Container restart frequency indicates resource constraint issues or application instability. Network latency between services affects overall application performance, particularly in microservices architectures.

Cost efficiency KPIs

They bridge the gap between technical metrics and business impact, providing granular insights into resource spending patterns through metrics such as cost per pod, cost per namespace, and cost per application.

Cluster health KPIs

They monitor the overall stability and capacity of the Kubernetes infrastructure. Node resource availability, pod scheduling success rate, and eviction frequency indicate the cluster’s capacity planning needs. API server response time and etcd performance metrics affect the overall cluster responsiveness.

Tools and methods for collecting performance data

The metrics-server provides basic CPU and memory metrics through the Kubernetes API, enabling kubectl top commands and horizontal pod autoscaling decisions.

Open source tools

Open source tools like Prometheus serve as the primary metrics collection and storage system for Kubernetes environments. Grafana visualizes collected metrics through customizable dashboards that display trends, alerting thresholds, and comparative analysis across periods.

For example, the first PromQL query below calculates the fraction of CFS periods in which a container was throttled by its CPU limit, indicating potential performance bottlenecks. The memory usage query helps identify containers approaching their memory limits and at risk of OOMKill events, and the third query counts container restarts over the past hour.

# CPU throttling ratio by container (throttled periods / total periods)

rate(container_cpu_cfs_throttled_periods_total[5m]) / rate(container_cpu_cfs_periods_total[5m])

# Memory usage percentage vs limits (containers without a limit report 0 and are filtered out)

(container_memory_working_set_bytes / (container_spec_memory_limit_bytes > 0)) * 100

# Pod restart frequency

increase(kube_pod_container_status_restarts_total[1h])
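To see what a throttling ratio measures, it helps to compute one by hand from two scrapes of the underlying cAdvisor counters (total CFS periods and throttled CFS periods). The Python below is a sketch with made-up counter values; the function name is illustrative.

```python
def throttled_fraction(periods_start: int, periods_end: int,
                       throttled_start: int, throttled_end: int) -> float:
    """Fraction of CFS periods in which the container was throttled between two scrapes."""
    elapsed_periods = periods_end - periods_start
    if elapsed_periods <= 0:
        return 0.0  # the container did not run (or the counter reset) in this window
    return (throttled_end - throttled_start) / elapsed_periods


# Hypothetical counter values taken five minutes apart.
ratio = throttled_fraction(
    periods_start=1000, periods_end=4000,
    throttled_start=100, throttled_end=850,
)
```

In this example the container hit its CPU limit in 750 of 3000 periods, a ratio of 0.25. A sustained ratio at that level usually means the limit is too low for the workload's bursts, even if average CPU usage looks modest.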

Resource usage collectors (kubectl top, cAdvisor)

The kubectl top command provides immediate snapshots of resource usage for troubleshooting and quick analysis. In comparison, cAdvisor metrics provide granular insights into container behavior, including memory page faults, disk I/O operations, and network traffic patterns.

# Real-time resource monitoring commands

kubectl top pods --containers --sort-by=cpu

kubectl top pods --containers --sort-by=memory

# Node resource availability and pressure

kubectl describe nodes | grep -A 5 -B 5 "Allocated resources"

# Pod resource requests vs limits analysis

kubectl describe pods | grep -A 2 -B 2 "Limits:\|Requests:"

# Check for resource pressure and evictions

kubectl get events --field-selector type=Warning | grep -i "evict\|oom\|failed"

The kubectl top commands above use the --containers flag to show resource usage at the individual container level, which is useful for identifying resource-hungry containers within multi-container pods. The describe and events commands help identify resource pressure and past eviction events that indicate sizing issues.

FinOps platforms

FinOps platforms offer cost visibility, correlating resource usage with financial impact across namespaces, teams, and applications. These platforms aggregate technical metrics with cost data, enabling organizations to identify high-impact optimization opportunities and track the economic benefits of resource efficiency improvements.


#2 Analysis: Identifying Kubernetes optimization opportunities

The analysis phase examines two categories of bottlenecks:

- Application-level bottlenecks

- Infrastructure-level constraints

Application-level bottleneck analysis

This includes optimizing container images, resolving runtime configuration issues such as environment variables, and addressing slow startup times.

Identify oversized container images and inefficient layers

Container image optimization represents one of the highest-impact areas for cluster-wide performance improvement. Oversized images increase pod startup times from seconds to minutes, consume unnecessary storage across all nodes, and waste network bandwidth during deployments.

Common culprits include package manager cache files, development tools left in production images, and redundant file copies across layers.

For example, the docker history command below shows layer-by-layer size contributions to identify bloated layers, while the find command discovers large files that shouldn’t exist in production images.

# Analyze image layers to identify the largest contributors

docker history --human --format "table {{.CreatedBy}}\t{{.Size}}" myapp:latest

# Identify files over 10MB that shouldn't be in production

docker run --rm -it myapp:latest find / -type f -size +10M 2>/dev/null

Multi-stage builds can reduce final image size by as much as 60-80%, depending on how heavy the build toolchain is, by separating build dependencies from runtime requirements. This technique eliminates build tools, source code, and intermediate artifacts from production containers.

Analyzing runtime configuration inefficiencies

Runtime misconfigurations lead to substantial resource waste, particularly in JVM-based applications, where default settings often assume bare-metal deployments.

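JVMs released before container support was added (Java 10, backported to Java 8u191) size the heap from host memory rather than the container limit, and container-aware JVMs default to a conservative maximum heap of 25% of the limit. The first case leads to memory pressure and OOMKilled events, while the second leaves most of the container's memory unused.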

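# Optimized JVM settings for containerized deployment
# JAVA_OPTS takes effect only if the image's launch script passes it to the java command;
# the JVM itself reads JAVA_TOOL_OPTIONS
- name: JAVA_OPTS
  value: "-XX:+UseG1GC -XX:MaxRAMPercentage=75.0 -XX:+UseContainerSupport"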
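As a quick check on what a percentage-based heap setting yields, the Python sketch below computes the maximum heap and the remaining headroom for a container with a 512Mi memory limit. The limit is an assumed example value and the function name is illustrative.

```python
MIB = 2**20


def max_heap_bytes(container_limit_bytes: int, max_ram_percentage: float) -> int:
    """Maximum heap a container-aware JVM derives from MaxRAMPercentage."""
    return int(container_limit_bytes * max_ram_percentage / 100)


limit = 512 * MIB                      # assumed container memory limit
heap = max_heap_bytes(limit, 75.0)     # heap ceiling at 75%
headroom = limit - heap                # left for metaspace, thread stacks, and native memory
```

With these numbers the heap tops out at 384 MiB and 128 MiB remains for non-heap memory. If the application uses many threads or large direct buffers, a lower percentage may be needed to stay under the limit.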

Multi-threaded Python applications on glibc-based images can suffer from memory fragmentation because the default malloc settings create many memory arenas.

# Optimized Python settings for containerized deployment
# Environment variable values must be strings, so numbers are quoted
- name: MALLOC_ARENA_MAX
  value: "2"
- name: PYTHONUNBUFFERED
  value: "1"
- name: PYTHONMALLOC
  value: malloc

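Node.js applications can exceed container memory limits because, depending on the Node.js version, V8 may size its heap without regard to the container limit, so garbage collection does not run aggressively enough before the container is OOM killed. Setting the old-space limit explicitly keeps the heap inside the container's budget.

# Optimized Node.js settings for containerized deployment
# Node.js reads flags from NODE_OPTIONS; --max-old-space-size takes a value in MiB
# (384 is an illustrative value, about 75% of a 512Mi limit)
- name: NODE_OPTIONS
  value: "--max-old-space-size=384"
# --optimize-for-size and --expose-gc are V8 flags that can be passed on the node command line instead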

Detect slow startup times and initialization bottlenecks

Pod startup delays directly impact the effectiveness of autoscaling and user experience during traffic spikes. Applications with 2-3 minute startup times prevent rapid scaling responses, forcing overprovisioning to maintain availability.

Common bottlenecks include:

- Database connection pool initialization

- Dependency injection framework setup

- Parsing large configuration files

The kubectl get events command below, with timestamp sorting, shows the pod lifecycle timeline to identify startup bottlenecks.

# Track pod lifecycle events to identify startup bottlenecks

kubectl get events --sort-by=.metadata.creationTimestamp | grep -E "Scheduled|Pulling|Started"

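Startup probes hold off liveness and readiness checks until the application has finished initializing, which prevents Kubernetes from killing pods during legitimate initialization periods, while readiness probes ensure traffic only reaches fully initialized containers. Proper probe configuration does not make the application itself start faster, but it removes the premature restarts that can stretch time-to-ready from seconds to minutes.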

Infrastructure component bottleneck analysis

This involves identifying node-level usage, network delays, storage IOPS bottlenecks, and performance diagnostics on control plane components.

Worker node resource utilization patterns

Node-level analysis reveals cluster-wide inefficiencies where individual pods appear properly configured but collectively create resource fragmentation. High allocation percentages with low actual utilization indicate systematic overprovisioning. Uneven pod distribution across nodes creates hotspots that trigger premature cluster scaling.

# Compare allocated vs available resources across all nodes

kubectl describe nodes | awk '/Name:|Allocated resources:/{print} /cpu|memory/{print}'

Resource fragmentation occurs when the remaining node capacity exists in unusable combinations, such as nodes with available CPU but insufficient memory, or vice versa. This fragmentation forces new pods onto additional nodes despite cluster-wide resource availability.
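The following Python sketch illustrates fragmentation with two hypothetical nodes: the cluster as a whole has enough free CPU and memory for a pod, yet no single node can host it. All node and pod figures are made up for illustration.

```python
def fits(pod: dict, node_free: dict) -> bool:
    """A pod fits only if one node can satisfy both its CPU and memory requests."""
    return pod["cpu"] <= node_free["cpu"] and pod["memory"] <= node_free["memory"]


# Free capacity per node (CPU in millicores, memory in MiB).
nodes_free = [
    {"cpu": 3000, "memory": 512},   # plenty of CPU, almost no memory
    {"cpu": 200, "memory": 8192},   # plenty of memory, almost no CPU
]
pod = {"cpu": 1000, "memory": 2048}

# Summed across nodes, the cluster appears to have room for the pod.
cluster_has_capacity = (
    sum(n["cpu"] for n in nodes_free) >= pod["cpu"]
    and sum(n["memory"] for n in nodes_free) >= pod["memory"]
)
# The scheduler, however, needs a single node that fits both dimensions.
schedulable = any(fits(pod, n) for n in nodes_free)
```

Here `cluster_has_capacity` is true while `schedulable` is false, so the pod stays Pending until the cluster autoscaler adds a node. Matching node shapes to the CPU-to-memory ratio of the workloads reduces this kind of stranded capacity.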

Network performance bottleneck identification

Network latency between pods affects microservices architectures where request chains span multiple services. CNI plugin overhead, service mesh proxy latency, and DNS resolution delays compound to create significant performance impacts. East-west traffic patterns often reveal inefficient pod placement across availability zones.

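# Test inter-pod network latency (connect and total request time, in seconds)

kubectl run netshoot --rm -i --tty --image nicolaka/netshoot -- curl -s -o /dev/null -w 'connect=%{time_connect}s total=%{time_total}s\n' http://service-name.namespace.svc.cluster.local

The netshoot pod above provides a network troubleshooting toolkit for testing inter-service connectivity and measuring latency between pods. The example times an HTTP request with curl (assuming the service serves HTTP on port 80) rather than using ping, because ClusterIP service addresses are virtual and often do not answer ICMP. This containerized approach generates network diagnostics without requiring host-level access or tools.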

Storage I/O performance analysis

Persistent volume performance bottlenecks emerge from storage class mismatches with workload requirements. Database workloads on network-attached storage often suffer from high latency due to random I/O operations. Log-heavy applications overwhelm storage IOPS limits, causing application timeouts and degraded user experience.

# Analyze persistent volume usage patterns

kubectl get pv -o custom-columns=NAME:.metadata.name,CAPACITY:.spec.capacity.storage,STORAGECLASS:.spec.storageClassName

Storage affinity misconfigurations place pods far from their persistent volumes, introducing unnecessary network latency for every disk operation. Local SSD storage can improve database performance compared to network storage, but requires careful pod placement strategies.

Control plane performance constraints

API server latency tends to grow with cluster size and object count. Excessive API calls from monitoring systems, CI/CD pipelines, and poorly configured controllers create performance bottlenecks that affect all cluster operations. Additionally, etcd performance degrades when disk write latency climbs: the etcd documentation recommends keeping the 99th-percentile WAL fsync duration below 10ms, and slower disks cause cascading delays throughout the control plane.

The command below retrieves API server request-latency metrics for control plane analysis.

# Monitor API server response times

kubectl get --raw /metrics | grep apiserver_request_duration_seconds

This command returns the raw histogram counters accumulated since the API server started, so it offers only a point-in-time view. It can’t show trends or provide a detailed analysis of all the data required for complete monitoring, and manual analysis of this kind lacks the predictive capabilities to recognize subtle patterns that develop over weeks or months.

Advanced optimization platforms, such as CloudBolt’s integrated StormForge solution, can overcome these challenges. StormForge utilizes machine learning algorithms to automate the analysis of workload patterns across thousands of containers, highlighting optimization opportunities that are invisible to manual analysis.

#3 Kubernetes optimization: Implementation and configuration

There are three main approaches to Kubernetes resource optimization.

- Autoscaling

- Advanced pod scheduling

- System-level optimizations

Kubernetes autoscaling implementation

Horizontal pod autoscaling

HPA automatically adjusts pod replica counts based on observed CPU utilization, memory usage, or custom metrics. Configure HPA with appropriate target thresholds and scaling policies to prevent erratic behavior during traffic fluctuations.

apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: web-app-hpa
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: web-app
  minReplicas: 2
  maxReplicas: 10
  metrics:
    - type: Resource
      resource:
        name: cpu
        target:
          type: Utilization
          averageUtilization: 70
  behavior:
    scaleDown:
      stabilizationWindowSeconds: 300

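This HPA configuration keeps a deployment between 2 and 10 replicas and adds pods when average CPU utilization, measured as a percentage of the pods' CPU requests, rises above the 70% target. The 5-minute stabilization window applies to scale-down decisions and prevents rapid scaling oscillations.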
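The replica count the HPA aims for follows a documented formula: the current replica count multiplied by the ratio of the observed metric to the target, rounded up. The Python sketch below applies it with the default 10% tolerance and the replica bounds from the example; the function name and sample utilizations are illustrative, and the real controller also handles missing metrics, unready pods, and stabilization windows.

```python
import math


def desired_replicas(current_replicas: int, current_utilization: float,
                     target_utilization: float, min_replicas: int,
                     max_replicas: int, tolerance: float = 0.1) -> int:
    """Replica count the HPA formula yields, clamped to the configured bounds."""
    ratio = current_utilization / target_utilization
    # Within the tolerance band, the HPA leaves the replica count unchanged.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))


# Four replicas, 70% target, bounds of 2 and 10 as in the example above.
scale_up = desired_replicas(4, 90, 70, 2, 10)      # 4 * 90/70 = 5.14, rounded up
no_change = desired_replicas(4, 72, 70, 2, 10)     # within the 10% tolerance
capped = desired_replicas(4, 350, 70, 2, 10)       # would be 20, capped at maxReplicas
floor = desired_replicas(4, 10, 70, 2, 10)         # would be 1, raised to minReplicas
```

Because utilization is measured against requests, inflated CPU requests make utilization look low and delay scale-up, which is one reason rightsizing requests and tuning the HPA go together.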

Vertical pod autoscaling

VPA automatically updates container resource requests based on actual usage patterns. Use VPA in recommendation mode initially to analyze suggested changes before enabling automatic updates.

apiVersion: autoscaling.k8s.io/v1
kind: VerticalPodAutoscaler
metadata:
  name: web-app-vpa
spec:
  targetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: web-app
  updatePolicy:
    updateMode: "Off" # Start with recommendations only
  resourcePolicy:
    containerPolicies:
      - containerName: app
        maxAllowed:
          cpu: "2"
          memory: "4Gi"

This VPA runs in recommendation mode to analyze resource usage patterns without making automatic changes. The maxAllowed values cap its recommendations to prevent excessive resource allocation, and containerName must match the name of a container in the target pods.

Cluster autoscaling

Cluster autoscaling automatically adjusts the number of nodes based on pending pod resource requirements. Configure node groups with appropriate scaling limits and instance types for different workload patterns.

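# Cluster Autoscaler flags, set as container arguments in its Deployment's pod template
# (the cluster-autoscaler-status ConfigMap is written by the autoscaler and is not a configuration input)
spec:
  containers:
    - name: cluster-autoscaler
      command:
        - ./cluster-autoscaler
        - --max-nodes-total=100
        - --scale-down-delay-after-add=10m
        - --scale-down-unneeded-time=10m
        - --skip-nodes-with-local-storage=false

These flags set cluster autoscaler parameters, including the maximum node count and the timing delays for scale-down operations, to prevent unnecessary node cycling. Setting skip-nodes-with-local-storage to false allows the autoscaler to remove nodes that run pods using emptyDir or hostPath volumes, so use it only when that data is disposable.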

Custom metrics implementation and scaling thresholds

Custom metrics provide more accurate scaling signals than CPU and memory alone. Implement application-specific metrics, such as request queue length or database connection pool utilization, to make better autoscaling decisions.

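# Custom metrics HPA using request rate
# Use this in place of the CPU-based HPA above; two HPAs must not target the same Deployment
apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: web-app-rps-hpa   # illustrative name
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: web-app
  minReplicas: 2
  maxReplicas: 10
  metrics:
    - type: Pods
      pods:
        metric:
          name: requests_per_second
        target:
          type: AverageValue
          averageValue: "100"

This HPA configuration scales on an application-specific request rate, adding replicas when the average across pods exceeds 100 requests per second. The requests_per_second metric must be exposed through the custom metrics API, typically by an adapter such as Prometheus Adapter.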

Advanced pod scheduling

For optimum performance, implement the following scheduling strategies:

Pod topology spread constraints for workload distribution

Topology spread constraints ensure even pod distribution across failure domains, preventing resource hotspots and improving availability. Configure constraints to balance pods across zones, nodes, or custom topology domains.

spec:
  topologySpreadConstraints:
    - maxSkew: 1
      topologyKey: kubernetes.io/hostname
      whenUnsatisfiable: DoNotSchedule
      labelSelector:
        matchLabels:
          app: web-app

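This constraint spreads matching pods evenly across nodes, allowing a difference of at most one pod between any two nodes. Because whenUnsatisfiable is DoNotSchedule, a pod that would break this balance stays Pending rather than being placed on an already crowded node; use ScheduleAnyway to treat the constraint as a preference instead.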
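The skew check itself is a small calculation: the number of matching pods a domain would hold after placement, minus the smallest count in any eligible domain. The Python sketch below applies it to three hypothetical nodes; it is a simplification that ignores node affinity, taints, and the minDomains setting.

```python
def skew_if_placed(pods_per_domain: dict[str, int], candidate: str) -> int:
    """Skew that would result from placing one more matching pod in `candidate`."""
    global_minimum = min(pods_per_domain.values())
    return pods_per_domain[candidate] + 1 - global_minimum


def allowed_domains(pods_per_domain: dict[str, int], max_skew: int) -> list[str]:
    """Domains where a new pod can land without exceeding maxSkew."""
    return [
        domain for domain in pods_per_domain
        if skew_if_placed(pods_per_domain, domain) <= max_skew
    ]


# Hypothetical count of app=web-app pods per node.
current = {"node-a": 2, "node-b": 1, "node-c": 1}
candidates = allowed_domains(current, max_skew=1)
```

With maxSkew: 1, node-a is rejected (it would reach three pods against a minimum of one) and the new pod can only go to node-b or node-c. If neither has room for the pod's requests, DoNotSchedule leaves it Pending.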

Pod priority and preemption configurations

Priority classes enable scheduling preferences for critical workloads. When cluster resources are constrained, high-priority pods can preempt lower-priority pods, allowing SLA compliance for production services.

apiVersion: scheduling.k8s.io/v1
kind: PriorityClass
metadata:
  name: production-priority
value: 1000
globalDefault: false
description: "Priority class for production workloads"

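This priority class assigns a value of 1000 to production workloads. Pods without a priority class default to 0, so production pods are scheduled ahead of them and can preempt them during resource contention. User-defined values can go up to 1,000,000,000, which leaves room for higher tiers.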

Advanced node affinity/anti-affinity techniques

Node affinity rules place pods on specific node types based on hardware requirements, while anti-affinity prevents resource contention by separating competing workloads across different nodes.

spec:
  affinity:
    nodeAffinity:
      requiredDuringSchedulingIgnoredDuringExecution:
        nodeSelectorTerms:
          - matchExpressions:
              - key: node-type
                operator: In
                values: ["compute-optimized"]
    podAntiAffinity:
      preferredDuringSchedulingIgnoredDuringExecution:
        - weight: 100
          podAffinityTerm:
            labelSelector:
              matchLabels:
                app: database
            topologyKey: kubernetes.io/hostname

This configuration places pods only on nodes labeled node-type=compute-optimized, while preferring nodes that are not already running pods labeled app: database, to avoid resource conflicts.

System-level optimizations

The following system-level techniques complement the approaches described above.

Control plane tuning

API server optimization reduces request latency through connection pooling and request prioritization. etcd tuning focuses on optimizing disk I/O performance and reducing network latency between cluster members.

# API server optimization flags

- --max-requests-inflight=400

- --max-mutating-requests-inflight=200

- --request-timeout=60s

- --enable-priority-and-fairness=true

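These API server flags control concurrent request limits, the request timeout, and priority queuing under heavy load. The values shown match the upstream defaults, so treat them as a starting point: raise the in-flight limits only when the API server has spare CPU and memory and its metrics show requests being rejected or queued.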

Network performance tuning

The selection and configuration of the CNI plugin directly impact network throughput and latency. DNS caching optimization reduces service discovery overhead, while service mesh configuration balances security features with performance requirements.

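# CoreDNS optimization for faster lookups (excerpt)
apiVersion: v1
kind: ConfigMap
metadata:
  name: coredns
  namespace: kube-system
data:
  Corefile: |
    .:53 {
        # keep the existing plugins (kubernetes, forward, and so on) from your cluster's Corefile
        cache 30
        reload 5s
        ready
    }

This CoreDNS excerpt caches DNS responses for up to 30 seconds and checks for Corefile changes every 5 seconds to reduce lookup latency and improve service discovery performance. It shows only the tuning-related plugins; the kubernetes and forward plugins from the cluster's existing Corefile must stay in place for service discovery to work.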

Storage performance optimization

Storage class configuration aligns workload I/O patterns with the most suitable storage types. Local SSD storage classes provide high IOPS for databases, while network storage offers durability for stateful applications whose data must survive node failures.

apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: fast-ssd
# In-tree GCE PD provisioner; clusters using the CSI driver specify pd.csi.storage.gke.io instead
provisioner: kubernetes.io/gce-pd
parameters:
  type: pd-ssd
  replication-type: none
reclaimPolicy: Delete
allowVolumeExpansion: true

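This storage class provisions SSD-backed persistent disks without regional replication, which suits database workloads that require low-latency storage. Note that pd-ssd volumes are still network-attached, and reclaimPolicy: Delete removes the disk when its claim is deleted, so pair it with backups.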

StormForge’s ML-powered optimization automatically sets and adjusts resource requests based on predicted demand patterns, achieving enterprise-scale automation with minimal manual intervention while delivering measurable cost reductions and performance improvements.

#4 Measurement: Evaluating performance and cost impact

Use the following techniques to measure Kubernetes optimization impact:

Performance regression testing and monitoring

Performance regression testing validates that optimization changes don’t degrade application responsiveness or stability. Automated testing pipelines should include load testing, latency measurement, and resource usage verification after each deployment.

# Automated performance test script (seq is used because the image's sh does not expand {1..1000})

kubectl run load-test --image=curlimages/curl --restart=Never -- sh -c 'for i in $(seq 1 1000); do curl -s -o /dev/null -w "time=%{time_total}s\n" http://web-app/api/health; done'

# Monitor response times during test (values are in seconds)

kubectl logs load-test | grep -o 'time=[0-9.]*s'

# Check for resource constraint indicators

kubectl get events | grep -E "OOMKilled|FailedScheduling|Evicted"

This script sends 1000 sequential HTTP requests from a single pod, records each response time, and checks for resource-related failures that could indicate optimization-induced performance issues. It works as a quick smoke test; use a dedicated load-testing tool when you need concurrent traffic.

Post-optimization analysis

Post-optimization analysis compares actual resource usage against previous baselines to validate efficiency improvements. Track CPU and memory utilization percentiles, pod restart rates, and scheduling success metrics to quantify the impact of optimization.

# Compare resource usage before and after optimization

kubectl top pods --sort-by=memory | head -20

kubectl top nodes --sort-by=cpu

# Analyze resource request accuracy

kubectl get pods -o jsonpath='{range .items[*]}{.metadata.name}{"\t"}{range .spec.containers[*]}{.resources.requests.memory}{"\t"}{end}{range .status.containerStatuses[*]}{.restartCount}{"\t"}{end}{"\n"}{end}'

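# Prometheus queries for optimization validation

# CPU usage per pod, in cores (compare against the pre-optimization baseline)

sum(rate(container_cpu_usage_seconds_total{container!=""}[5m])) by (pod)

# Memory usage as a percentage of limits (containers without a limit are filtered out)

(container_memory_working_set_bytes / (container_spec_memory_limit_bytes > 0)) * 100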

These commands and queries provide immediate visibility into memory and CPU usage patterns, helping to identify whether optimizations achieve the expected resource efficiency gains.

Cost impact assessment and ROI calculation

Cost impact measurement requires correlating resource efficiency gains with actual reductions in infrastructure spending. Track metrics such as cost per pod, namespace spending patterns, and cluster utilization rates to calculate the ROI of optimization.

Cost-per-workload calculations should account for both compute costs (CPU, memory) and infrastructure overhead (networking, storage, control plane). Track these metrics before and after optimization to demonstrate financial impact.
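A before-and-after comparison can be reduced to simple arithmetic once unit prices are known. The Python sketch below uses hypothetical hourly prices and request sizes purely for illustration; real figures should come from your cloud bill or FinOps platform, and the calculation covers compute only, not the networking, storage, or control plane overhead mentioned above.

```python
HOURS_PER_MONTH = 730


def monthly_compute_cost(cpu_cores: float, memory_gib: float,
                         price_per_core_hour: float,
                         price_per_gib_hour: float) -> float:
    """Monthly cost of a workload's requested CPU and memory."""
    hourly = cpu_cores * price_per_core_hour + memory_gib * price_per_gib_hour
    return hourly * HOURS_PER_MONTH


# Hypothetical unit prices (currency units per hour), not taken from any provider.
CORE_PRICE = 0.03
GIB_PRICE = 0.004

before = monthly_compute_cost(4, 16, CORE_PRICE, GIB_PRICE)   # requests before rightsizing
after = monthly_compute_cost(2, 8, CORE_PRICE, GIB_PRICE)     # requests after rightsizing
savings_pct = (before - after) / before * 100
```

With these assumed numbers, halving both requests cuts the workload's monthly compute cost from about 134.32 to 67.16, a 50% reduction. The savings are only realized if the freed capacity lets the cluster run fewer or smaller nodes.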

Closed-loop optimization systems and continuous refinement

CloudBolt’s integrated solution creates closed-loop systems that continuously measure performance impact and refine optimization strategies based on real-world results. These systems automatically detect changes in workload patterns and adjust resource allocations accordingly, maintaining optimal efficiency without requiring manual intervention.


Conclusion

Kubernetes optimization requires systematic baseline measurement, gradual implementation, and continuous monitoring to achieve meaningful cost reductions and performance improvements. Start with VPA in recommendation mode to understand resource allocation inefficiencies, implement conservative HPA thresholds to prevent waste, and focus optimization efforts on high-impact areas identified through data analysis rather than applying generic configurations across all workloads.

Build optimization as an ongoing process with automated testing pipelines that validate performance after each change. Develop workload-specific strategies for different application types, create feedback loops between development and operations teams, and establish monitoring infrastructure before attempting large-scale optimization initiatives. Plan for gradual rollouts using canary deployments to prevent optimization-induced performance regressions.

For teams seeking automated ML-powered optimization solutions, CloudBolt’s integrated StormForge platform delivers comprehensive visibility, intelligence, and automation capabilities that manual approaches cannot match.
