The Microsoft AI Cloud Partner Program provides managed service providers (MSPs) with access to training, technical support, and business development resources designed to help them integrate AI services into their existing service offerings. The challenge is that AI workloads behave differently from the virtual machines, databases, and applications that MSPs currently manage for clients, which makes AI services harder to staff, plan, price, and profit from than traditional managed services.

This article explores the Microsoft AI Cloud Partner Program and outlines key considerations for MSPs when developing AI practices. We cover the program structure, the types of AI services clients request, how to implement them successfully, and the operational realities of running AI workloads for multiple clients. The goal is to help you understand both the opportunity and the operational complexity involved in delivering AI as a managed service.

Summary of key Microsoft AI Cloud Partner Program concepts

| Component | Description |
|---|---|
| Program tiers and benefits | The program combines Solutions Partner designations, which replaced the legacy Silver and Gold competencies, with Launch, Core, and Expanded benefits packages. Benefits include technical support, co-selling opportunities, and exclusive access to AI resources tailored to different MSP maturity levels. |
| AI specialization pathways | Structured certification tracks for Azure AI services, machine learning operations, and cognitive services enable MSPs to build verifiable expertise in specific AI domains relevant to enterprise client needs. |
| Partner Concierge Service | The Microsoft AI Cloud Partner Program Concierge provides dedicated advisory sessions for partners with active Core or Expanded benefits packages, or Solutions Partner designations. |
| Technical implementation framework | Microsoft provides comprehensive training materials, hands-on labs, architectural guidance, and best-practice documentation designed explicitly for MSPs managing AI workloads at scale. |
| Client engagement and service delivery | Program members receive dedicated technical assistance, solution blueprints, and implementation frameworks to help enterprise clients deploy and optimize AI applications in production environments. However, MSPs must still define for themselves how usage is tracked, costs are allocated, and services are priced across tenants. |
| Marketplace and co-selling benefits | Partners gain access to Microsoft’s commercial marketplace, joint go-to-market strategies, and co-selling and reselling programs that connect MSPs with enterprises actively seeking AI implementation partners. |
| Ongoing management capabilities | The program includes tools and frameworks for monitoring AI workload performance, optimizing resource utilization, and maintaining compliance across hybrid cloud environments. However, MSPs still need their own systems for translating technical metrics into billable service consumption. |


Program tiers and benefits

Partnership tiers and progression

The Microsoft AI Cloud Partner Program structures partnerships across multiple tiers. The Solutions Partner designation has replaced the legacy competencies (Gold and Silver partner levels), focusing on demonstrated customer success rather than purely technical certifications. MSPs earn Solutions Partner status by meeting specific requirements in one or more solution areas:

- Data and AI (Azure)

- Digital and app innovation (Azure)

- Infrastructure (Azure)

- Business applications

- Modern Work

- Security

Each designation is earned through a partner capability score that reflects performance, skilling, and customer success, including validated customer deployments.

Technical benefits by level

Technical benefits scale with partnership level. Entry-level partners receive Azure credits for internal development and testing (starting at $500 per month, with larger allocations at higher levels), plus technical documentation and community support forums. Higher tiers unlock dedicated technical account management, priority support channels, and private preview access to emerging AI services before they become generally available.

Business development support becomes more substantial as you advance through the program tiers. Microsoft field teams receive partner profiles and can directly refer qualified opportunities to MSPs with proven AI implementation track records. Co-selling arrangements allow joint pursuit of enterprise accounts, with Microsoft sales representatives actively positioning partner services alongside platform licenses.

Advanced partner capabilities

Access to exclusive AI tools differentiates higher partnership levels. Advanced partners receive early access to several specialized capabilities, such as the following:

- Azure OpenAI Service with priority capacity allocation for large language model implementations

- Custom vision training environments that accelerate computer vision model development

- Enhanced MLOps tooling for production model management and monitoring

- Dedicated technical resources for complex architectural challenges

These capabilities allow you to develop differentiated offerings before they become commoditized through broader market availability. However, priority allocation doesn’t guarantee immediate access; regional waitlists may still apply.

Enhanced Benefits Rolling Out February 2026

Microsoft is expanding partner benefits packages throughout February 2026, adding AI and security capabilities across all tiers. These additions target the operational challenges partners face when building AI practices and managing multi-tenant security at scale.

The expansion includes increased Copilot licensing, expanded security tooling, and developer resources that address the infrastructure gaps many partners encounter when scaling AI services. Partners purchasing or renewing Launch, Core, or Expanded packages receive these additions automatically, while partners whose packages are already active gain access to the cloud-based benefits through retrofitting.

New additions across benefit tiers:

- Microsoft 365 Copilot seats – Additional licenses for Partner Success Expanded Benefits and Solutions Partner designations in Business Applications, Modern Work, and Security solution areas. The allocation includes Microsoft 365 Copilot for Sales, Finance, and Service variants tailored to vertical-specific workflows.

- Azure credits for Copilot Studio – Development credits for building custom AI agents and extending Copilot functionality. Partners building customer implementations can prototype and test agent architectures before deploying to production environments.

- Security Copilot integration – Included with Solutions Partner designations for Security solution areas. The offering provides AI-assisted threat hunting and incident response capabilities through the same Copilot interface partners already use for productivity workflows.

Additional benefits include Teams Premium and Teams Rooms Pro licenses, GitHub Copilot Enterprise seats, and Microsoft Defender for Endpoint licenses. The security-focused additions address the compliance and threat-detection requirements partners face when managing customer environments across hybrid infrastructure.

AI specialization pathways

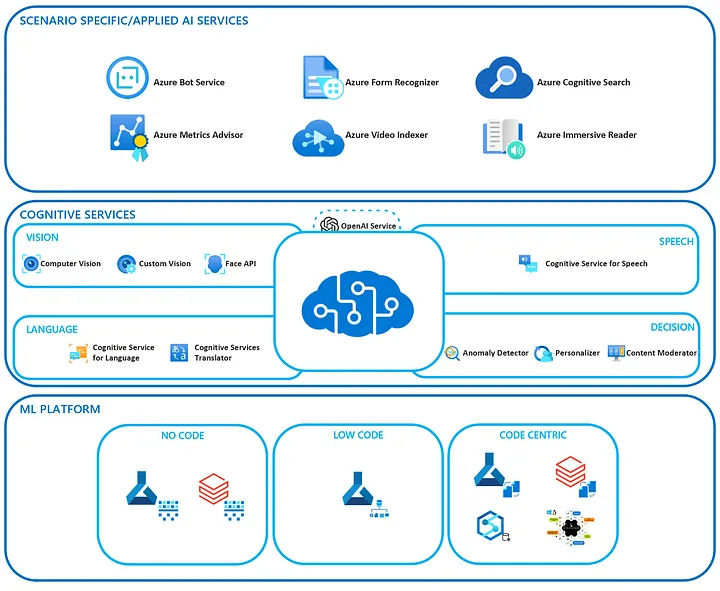

Partners typically plan AI engagements around three distinct approaches: leveraging Azure AI and Machine Learning services, integrating Cognitive Services, and implementing industry-specific AI solutions.

Azure AI and Machine Learning services

Azure AI and Machine Learning services form the foundation of most MSP AI offerings. Azure Machine Learning provides comprehensive end-to-end management of the machine learning lifecycle, encompassing data preparation, model training, deployment, and monitoring. MSPs typically position this service for clients building custom models specific to their business domains, such as fraud detection systems, predictive maintenance applications, or demand forecasting tools.

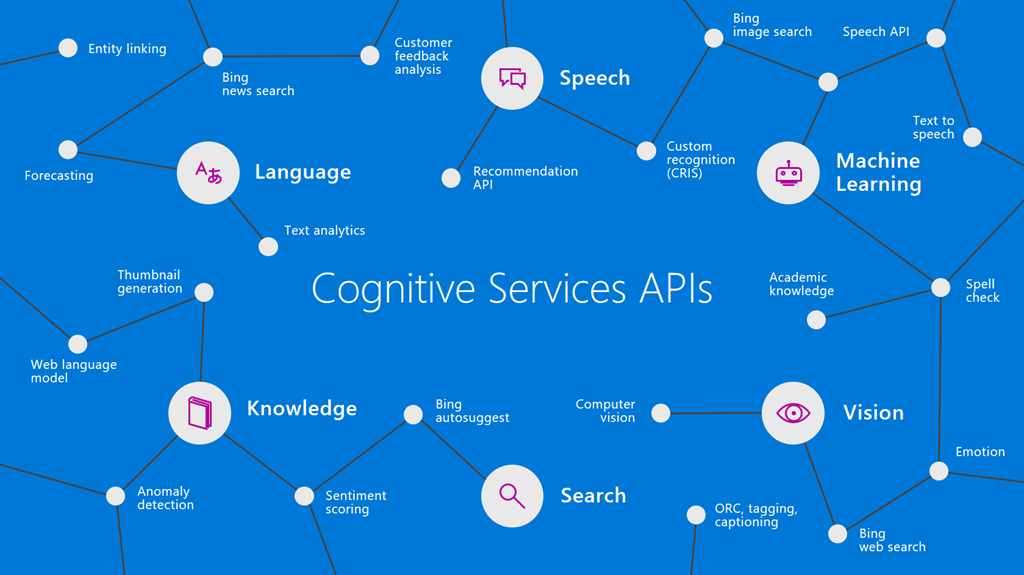

Cognitive Services integration

Cognitive Services (now branded as Azure AI services) address everyday AI needs without requiring data science expertise. These pre-built APIs handle tasks like document analysis, language translation, speech recognition, and computer vision. Pricing is consumption-based, and Microsoft partners can expect approximate rates in the following ranges:

- Document analysis costs approximately $1.50 per 1,000 pages.

- Speech-to-text services cost around $1 per audio hour.

- Vision API calls typically range from $1-2 per 1,000 images.

MSPs often bundle cognitive services into existing application modernization engagements, adding AI capabilities to legacy systems without complete application rewrites.
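To turn these per-unit rates into a monthly figure for a client proposal, a small estimator is enough. The sketch below is illustrative only: `estimate_monthly_cost` is a hypothetical helper, it hard-codes the approximate rates listed above (taking $1.50 per 1,000 images as the midpoint of the vision range), and actual Azure prices vary by region, pricing tier, and model, so confirm current rates before quoting.

```python
# Approximate rates from the list above; actual Azure prices vary by region,
# pricing tier, and model, so treat these defaults as placeholders.
DOC_RATE_PER_1000_PAGES = 1.50
SPEECH_RATE_PER_AUDIO_HOUR = 1.00
VISION_RATE_PER_1000_IMAGES = 1.50  # midpoint of the $1-2 range


def estimate_monthly_cost(pages: int, audio_hours: float, images: int,
                          vision_rate: float = VISION_RATE_PER_1000_IMAGES) -> dict:
    """Return per-service and total monthly cost estimates in USD."""
    costs = {
        "document_analysis": pages / 1000 * DOC_RATE_PER_1000_PAGES,
        "speech_to_text": audio_hours * SPEECH_RATE_PER_AUDIO_HOUR,
        "vision": images / 1000 * vision_rate,
    }
    costs["total"] = round(sum(costs.values()), 2)
    return costs


if __name__ == "__main__":
    estimate = estimate_monthly_cost(pages=20_000, audio_hours=100, images=50_000)
    for service, cost in estimate.items():
        print(f"{service:>18}: ${cost:,.2f}")
```

For 20,000 pages, 100 audio hours, and 50,000 images, this works out to roughly $205 per month in platform consumption, before the MSP's own service margin.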

AI-powered analytics and business intelligence extend traditional reporting with predictive and prescriptive capabilities. For example, Azure Synapse Analytics combines data warehousing with machine learning integration, allowing analysts to build forecasting models directly within their familiar BI workflows. MSPs supporting enterprise analytics teams find that this integration reduces the friction between data exploration and AI implementation.


Industry-specific AI solutions

Industry-specific AI solutions utilize pretrained models and reference architectures tailored to specific vertical markets. Developing expertise in one or two vertical markets enables MSPs to establish repeatable implementation patterns and gain differentiated industry knowledge. Here are some example industries:

- Healthcare: Azure Health Bot for patient engagement, medical imaging analysis services for radiology workflows, and FHIR-compatible data processing

- Financial services: Real-time anomaly detection for transaction monitoring, fraud prediction models, and regulatory compliance automation

- Manufacturing: Predictive maintenance using IoT sensor data and machine learning, quality control vision systems, and supply chain optimization

- Retail: Demand forecasting, customer behavior analysis, and personalized recommendation engines

Hybrid AI architectures spanning cloud and edge address scenarios where data locality, latency, or connectivity constraints prevent pure cloud implementations. Azure Stack Edge brings GPU-accelerated compute to remote locations, enabling local model inference while maintaining cloud connectivity for model updates and centralized monitoring. MSPs managing distributed environments, such as retail chains, manufacturing facilities, and remote field operations, increasingly encounter requirements for edge AI capabilities.

| Workload Type | Compute Pattern | Primary Location | Use Case Example |
|---|---|---|---|
| Model training | Burst-intensive, GPU-heavy | Cloud datacenter | Developing new fraud detection models |
| Real-time inference | Sustained, latency-sensitive | Edge or cloud | Manufacturing quality inspection |
| Batch inference | Scheduled, throughput-focused | Cloud datacenter | Overnight transaction analysis |
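The table can also be encoded as a placement rule in provisioning tooling. This is a minimal sketch under stated assumptions: the `Workload` fields and the 50 ms edge threshold are hypothetical, and a real placement decision would also weigh data locality, hardware cost, and site operations.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical threshold: latency budgets below this are assumed too tight for
# a WAN round trip to a cloud region, so inference is placed at the edge.
EDGE_LATENCY_THRESHOLD_MS = 50.0


@dataclass
class Workload:
    kind: str  # "training", "batch_inference", or "realtime_inference"
    latency_budget_ms: Optional[float] = None  # used for real-time inference only
    site_has_reliable_wan: bool = True


def choose_location(w: Workload) -> str:
    """Map a workload to "cloud" or "edge", following the table above."""
    if w.kind in ("training", "batch_inference"):
        # Burst-intensive or throughput-focused work runs in the cloud datacenter.
        return "cloud"
    if w.kind == "realtime_inference":
        if not w.site_has_reliable_wan:
            return "edge"  # predictions cannot depend on an unreliable link
        if (w.latency_budget_ms is not None
                and w.latency_budget_ms < EDGE_LATENCY_THRESHOLD_MS):
            return "edge"
        return "cloud"
    raise ValueError(f"unknown workload kind: {w.kind}")
```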

Partner Concierge Service

One-on-One Advisory Through Partner Concierge

The Microsoft AI Cloud Partner Program Concierge provides dedicated advisory sessions for partners with active Core or Expanded benefits packages, or Solutions Partner designations. Rather than navigating program documentation independently, partners work directly with specialists who understand their technical architecture and go-to-market strategy.

Concierge sessions address specific operational challenges: optimizing Marketplace listings for discovery, structuring co-sell engagements with Microsoft field teams, or accessing technical presales resources for complex customer deployments. The service bridges the gap between program eligibility and practical implementation.

Advisory areas partners access through Concierge:

- Marketplace optimization – Specialists review offer listings, pricing models, and transact-enablement to improve conversion rates. Sessions cover technical requirements for ISV app publishing, co-sell badge criteria, and integration with Microsoft’s procurement workflows.

- Co-sell pipeline development – Guidance on referral submissions, BANT criteria validation, and Microsoft seller engagement. Partners learn how to structure opportunities for field collaboration and access Microsoft’s customer intelligence through SPARK propensity data.

- Technical presales support – Access to architecture reviews, proof-of-concept guidance, and deployment planning for customer engagements. Particularly valuable when partners need Microsoft-specific technical validation before committing to implementation timelines.

Access Partner Concierge through Partner Center’s navigation menu under the Concierge option. Schedule consultations directly or request async guidance on specific program questions. Partners without current access can purchase Partner Success Core Benefits ($4,795 annually) or Partner Success Expanded Benefits ($14,995 annually) to activate the service.

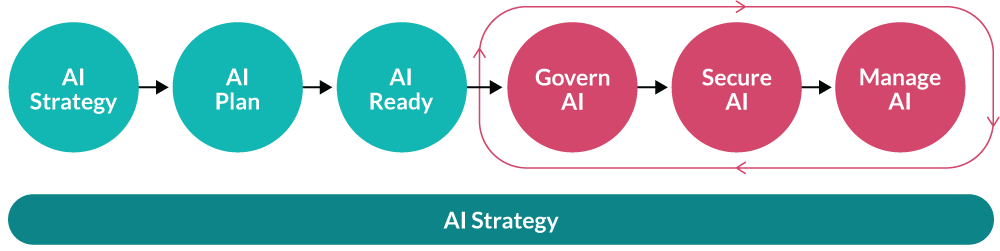

Technical implementation framework

The Azure Cloud Adoption Framework provides a structured methodology for implementing AI workloads that MSPs can adapt for client engagements. This framework moves through distinct phases: Strategy, Plan, Ready, and Adopt. Each phase addresses specific technical and organizational requirements for the successful deployment of AI.

Strategy and Planning phases

AI strategy development begins with the identification and prioritization of use cases. Strategic prioritization ensures that you focus resources on projects that deliver maximum value while aligning with your organizational capabilities. Typically, MSPs evaluate each potential AI implementation against three key criteria: business value alignment, technical feasibility with available data, and resource requirements in relation to client capabilities.
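One way to make this evaluation repeatable across clients is a weighted score per candidate use case. The sketch below is a hypothetical scoring model, not a Microsoft methodology: the 1-5 scale, the `UseCase` fields, and the 50/30/20 weights are assumptions to adjust per engagement, and resource requirements are expressed as "resource fit" so that a higher score is always better.

```python
from dataclasses import dataclass

# Hypothetical weights for the three criteria; adjust them per engagement.
WEIGHTS = {"business_value": 0.5, "data_feasibility": 0.3, "resource_fit": 0.2}


@dataclass
class UseCase:
    name: str
    business_value: int    # 1-5: alignment with the client's business goals
    data_feasibility: int  # 1-5: usable data exists for this use case
    resource_fit: int      # 1-5: 5 means requirements fit client capabilities easily

    def score(self) -> float:
        for criterion in WEIGHTS:
            if not 1 <= getattr(self, criterion) <= 5:
                raise ValueError(f"{self.name}: {criterion} must be between 1 and 5")
        return round(sum(getattr(self, c) * w for c, w in WEIGHTS.items()), 2)


def prioritize(candidates: list[UseCase]) -> list[tuple[str, float]]:
    """Rank use cases from highest to lowest weighted score."""
    ranked = sorted(candidates, key=lambda uc: uc.score(), reverse=True)
    return [(uc.name, uc.score()) for uc in ranked]


if __name__ == "__main__":
    for name, score in prioritize([
        UseCase("Demand forecasting", 4, 2, 4),
        UseCase("Customer churn prediction", 5, 4, 3),
        UseCase("Predictive maintenance", 3, 5, 3),
    ]):
        print(f"{score:.2f}  {name}")
```

The scores only order candidates; a low data-feasibility score is still worth discussing with the client before a use case is dropped.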

Infrastructure capacity constrains AI project scope and influences deployment strategies. Assess the current environment against AI workload requirements:

- Compute resources: GPU availability for training and inference, with consideration for Azure virtual machines with GPU support or managed Azure Machine Learning compute

- Storage capacity: High-throughput storage systems capable of handling training data pipelines and model artifacts

- Network bandwidth: Sufficient capacity for distributed training scenarios and real-time inference traffic

- Security controls: Data governance, access management, and compliance frameworks aligned with AI-specific requirements

Different AI solutions have characteristic implementation timelines that vary based on organizational readiness and project scope. For example, Microsoft Copilot implementations typically deliver value within days to weeks, while custom Azure AI workloads require several weeks to months to reach production readiness. Be sure to build 20-30% buffer time into estimates to account for data quality issues, model iteration cycles, and integration challenges.

AI Readiness phase

The AI Readiness phase focuses on establishing the technical foundation for AI workloads. An Azure landing zone is the recommended starting point that prepares your Azure environment with a predefined setup for platform and application resources. MSPs managing multiple clients should establish separate landing zones for each client to maintain governance boundaries and isolate costs.

Resource organization follows management group hierarchies:

- Ensure management group separation to establish critical data governance boundaries between external and internal AI applications.

- Deploy AI resources within workload-specific subscriptions rather than shared platform subscriptions.

- Maintain policy inheritance while enabling team autonomy.
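The inheritance principle in the last bullet can be illustrated with a toy model of one client's hierarchy. Everything in this sketch is hypothetical: the scope names and policy labels are placeholders rather than Azure built-in policy names, and the model ignores Azure Policy features such as exemptions and exclusions. It shows how a workload subscription receives everything assigned above it while its team can still add scope-specific policies.

```python
# Hypothetical hierarchy for one client: a client root management group,
# separate groups for external and internal AI apps, then workload subscriptions.
PARENT = {
    "client-a": None,
    "client-a-ai-external": "client-a",
    "client-a-ai-internal": "client-a",
    "sub-chatbot-prod": "client-a-ai-external",
    "sub-forecasting-prod": "client-a-ai-internal",
}

# Placeholder policy labels assigned directly at each scope.
ASSIGNED_POLICIES = {
    "client-a": {"allowed-regions:eu", "require-cost-center-tag"},
    "client-a-ai-external": {"deny-public-network-access"},
    "client-a-ai-internal": set(),
    "sub-chatbot-prod": {"require-diagnostic-settings"},  # team-level addition
    "sub-forecasting-prod": set(),
}


def ancestry(scope: str) -> list[str]:
    """Return the chain from the given scope up to the client root."""
    chain = []
    current = scope
    while current is not None:
        chain.append(current)
        current = PARENT[current]
    return chain


def effective_policies(scope: str) -> set[str]:
    """Policies assigned at a scope plus everything inherited from its ancestors."""
    policies: set[str] = set()
    for node in ancestry(scope):
        policies |= ASSIGNED_POLICIES.get(node, set())
    return policies
```

Because external and internal AI applications sit under different groups, the public-network restriction applies to the chatbot subscription without affecting the forecasting one.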

Network architecture and regional deployment

Network architecture for AI workloads requires different patterns than traditional applications. AI reliability requires strategic region placement and redundancy planning to ensure consistent performance and high availability. Deploy AI endpoints across multiple regions for production workloads serving critical business functions. Consider data locality requirements—model training data often needs to remain in specific geographic regions for compliance reasons.
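A multi-region deployment needs a rule for which endpoint serves a request. The sketch below assumes a hypothetical `Endpoint` record with a priority and a health flag, and shows one way to combine failover with data residency: only endpoints inside the client's permitted geography are eligible, even when that leaves no healthy option. Region names are examples, and in production a managed traffic-routing service may handle this instead of application code.

```python
from dataclasses import dataclass


@dataclass
class Endpoint:
    region: str     # e.g. "westeurope"
    geography: str  # data-residency boundary, e.g. "EU"
    priority: int   # lower value = preferred
    healthy: bool


def select_endpoint(endpoints: list[Endpoint], allowed_geography: str) -> Endpoint:
    """Pick the highest-priority healthy endpoint inside the allowed geography.

    Endpoints outside the client's residency boundary are never used, even if
    they are the only healthy ones left; failing loudly is preferable to
    silently sending data to a non-compliant region.
    """
    candidates = [e for e in endpoints
                  if e.geography == allowed_geography and e.healthy]
    if not candidates:
        raise RuntimeError(f"no healthy endpoint available in {allowed_geography}")
    return min(candidates, key=lambda e: e.priority)
```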

Adoption phase

The adoption phase transforms planning into production-ready AI systems through a structured implementation roadmap. Success requires making informed decisions about deployment architecture, integration patterns, and resource allocation before entering detailed implementation phases.

Architecture patterns determine how AI workloads will serve predictions to end users and business systems, as shown in the following table.

| Pattern Type | Latency Requirements | Use Case Examples |
|---|---|---|
| Batch inference | Hours to overnight acceptable | Fraud scoring, sentiment analysis, and daily reporting |
| Real-time inference | Sub-second response needed | Chatbots, recommendation engines, and quality control |

These architectural choices directly impact the infrastructure requirements during environment setup and influence the complexity of production deployment.

Integration approaches define how AI capabilities connect with existing business processes. API-based patterns create loosely coupled architectures where AI services operate independently and return predictions to calling applications, making them easier to test and deploy incrementally. Embedded patterns incorporate model inference directly into application logic, making them suitable for scenarios that require extremely low latency or offline operation; however, they increase integration testing requirements during deployment phases.

Resource planning accounts for the distinct consumption patterns of AI workloads. Training workloads exhibit bursty consumption, characterized by periods of intense GPU utilization followed by relative quiet during model evaluation. Inference workloads typically require sustained capacity but consume fewer resources than training. Plan for experimentation phases, particularly during model development, when data scientists iterate on model designs and run many training cycles in quick succession.

The implementation roadmap below outlines typical timeline estimates for each phase, helping MSPs set realistic client expectations and allocate resources effectively across concurrent projects.

| Implementation Phase | Timeline Estimate | Key Activities |
|---|---|---|
| Use case validation | 1-2 weeks | Business value assessment, data availability review, and feasibility analysis |
| Environment setup | 2-4 weeks | Landing zone configuration, network architecture, and policy implementation |
| Data preparation | 4-8 weeks | Data cleaning, feature engineering, and labeling for supervised learning |
| Model development | 3-6 weeks | Training iterations, evaluation, and hyperparameter tuning |
| Production deployment | 2-4 weeks | Integration testing, monitoring setup, and user acceptance |
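Combining the ranges in this table with the 20-30% buffer recommended in the planning phase gives a quick end-to-end estimate. The sketch below assumes the phases run strictly in sequence, which overstates duration when, for example, environment setup overlaps with data preparation; `estimate_timeline` is a hypothetical helper name.

```python
# Phase ranges in weeks, taken from the implementation roadmap table above.
PHASES = {
    "Use case validation": (1, 2),
    "Environment setup": (2, 4),
    "Data preparation": (4, 8),
    "Model development": (3, 6),
    "Production deployment": (2, 4),
}


def estimate_timeline(phases: dict, buffer_low: float = 0.20,
                      buffer_high: float = 0.30) -> tuple[float, float]:
    """Sum sequential phase ranges, then pad the low and high ends with a buffer."""
    low = sum(lo for lo, _ in phases.values())
    high = sum(hi for _, hi in phases.values())
    return round(low * (1 + buffer_low), 1), round(high * (1 + buffer_high), 1)


if __name__ == "__main__":
    low, high = estimate_timeline(PHASES)
    print(f"Plan for roughly {low}-{high} weeks")
```

With the defaults, the table's raw 12-24 weeks becomes roughly 14.4-31.2 weeks once the buffer is applied.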

Client engagement and service delivery

As MSPs achieve a specific partnership tier and establish a technical foundation, they typically need to develop a customer engagement and service delivery strategy. That includes creating a portfolio of AI service offerings, planning discovery and pre-sales engagements, and establishing financial parameters.

Developing AI service offerings

Developing AI service offerings for enterprise clients requires striking a balance between flexibility and standardization. Offering completely custom AI solutions for every client creates unsustainable engineering overhead and prevents knowledge reuse across engagements. Conversely, rigid productized offerings fail to address unique client requirements and competitive differentiators.

Successful MSPs develop tiered service frameworks that combine repeatable components with customization points. A typical structure includes three service levels:

- Foundation tier: Prebuilt cognitive services integration (document analysis, speech recognition, translation) with standard implementation patterns and fixed-scope delivery

- Custom tier: Tailored machine learning models for specific business domains (fraud detection, demand forecasting, predictive maintenance) with phased development and outcome-based pricing

- Enterprise tier: Complex AI platforms with ongoing model management, retraining services, and dedicated support resources

Structure service catalogs around business outcomes rather than technical capabilities. “Customer churn prediction” resonates more with buyers than “binary classification models.” Include implementation complexity estimates, typical timeline ranges, and prerequisite requirements, such as minimum training data volumes, in each service description.
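A catalog entry that follows this advice can be modeled as a small record that keeps the business-facing name separate from the internal technique. The `ServiceOffering` type, its fields, and the churn example's timeline and prerequisites below are hypothetical placeholders, not figures from Microsoft.

```python
from dataclasses import dataclass, field


@dataclass
class ServiceOffering:
    outcome: str                     # business-facing name shown to buyers
    tier: str                        # "Foundation", "Custom", or "Enterprise"
    technique: str                   # internal technical description, not shown
    complexity: str                  # e.g. "Low", "Medium", "High"
    timeline_weeks: tuple[int, int]  # typical delivery range
    prerequisites: list[str] = field(default_factory=list)

    def catalog_line(self) -> str:
        """Render the buyer-facing summary; the technique stays internal."""
        lo, hi = self.timeline_weeks
        prereqs = "; ".join(self.prerequisites) or "none"
        return (f"{self.outcome} [{self.tier}] | complexity: {self.complexity} | "
                f"{lo}-{hi} weeks | prerequisites: {prereqs}")


if __name__ == "__main__":
    churn = ServiceOffering(
        "Customer churn prediction", "Custom", "binary classification model",
        "Medium", (10, 16),
        ["24 months of customer history", "labeled churn events"],
    )
    print(churn.catalog_line())
```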

Discovery and pre-sales engagement

Commercial success with AI services depends on effective discovery processes that qualify opportunities and set realistic expectations. At this stage, MSPs typically design various maturity assessment offerings, including:

- AI readiness assessments

- Educational workshops

- Jump-start engagements built on Microsoft programs, when available

Data readiness validation

Another critical component is assessing data readiness during the discovery phase. Clients often overestimate their data quality and availability. MSPs have to validate that sufficient historical data exists, that it’s accessible in usable formats, and that it contains the signals necessary for the AI use case being discussed. Many promising AI projects fail because critical data doesn’t exist or requires prohibitive effort to prepare.
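Parts of this validation can be automated with a quick profiling pass over a sample extract. The checks and thresholds below (row count, history span, missing-value ratio) are hypothetical starting points rather than universal requirements; the right minimums depend on the model type and the strength of the signal, and passing them does not prove the data contains a usable signal.

```python
from datetime import date, timedelta

# Hypothetical minimums for a supervised use case; real thresholds depend on
# the model type, the signal strength, and the number of classes.
MIN_ROWS = 10_000
MIN_HISTORY_DAYS = 365
MAX_MISSING_RATIO = 0.10


def assess_data_readiness(records: list[dict], date_field: str,
                          label_field: str, required_fields: list[str]) -> list[str]:
    """Return readiness issues; an empty list means the basic checks passed."""
    issues = []
    if len(records) < MIN_ROWS:
        issues.append(f"only {len(records)} rows; need at least {MIN_ROWS}")
    if not records:
        return issues
    # Is there enough history to capture seasonality and rare events?
    dates = [r[date_field] for r in records if r.get(date_field) is not None]
    if not dates:
        issues.append(f"no usable values in '{date_field}'")
    else:
        span = (max(dates) - min(dates)).days
        if span < MIN_HISTORY_DAYS:
            issues.append(f"history spans {span} days; need at least {MIN_HISTORY_DAYS}")
    # Are the features and the label actually populated?
    for name in required_fields + [label_field]:
        missing = sum(1 for r in records if r.get(name) in (None, ""))
        ratio = missing / len(records)
        if ratio > MAX_MISSING_RATIO:
            issues.append(f"'{name}' is missing in {ratio:.0%} of rows")
    return issues


if __name__ == "__main__":
    start = date(2024, 1, 1)
    sample = [{"day": start + timedelta(days=i % 400), "amount": 10.0,
               "churned": None if i % 5 == 0 else i % 2}
              for i in range(10_000)]
    for issue in assess_data_readiness(sample, "day", "churned", ["amount"]):
        print("-", issue)
```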

Establishing financial parameters

Beyond technical implementation timelines, MSPs must estimate the total cost of ownership (TCO) of the AI solutions implemented and hosted on Azure. First, partners should calculate the ballpark cloud cost of the infrastructure for the first year of usage. Second, they should define how further usage will be tracked, costs allocated, and services priced across tenants from the outset.

Additionally, AI workloads introduce billing complexity that differs from traditional cloud services. This includes the distinction between GPU hours consumed during training versus inference, data storage volumes that fluctuate with model development cycles, and API call patterns that vary unpredictably with application usage.

Such FinOps and cost governance activities create substantial operational overhead for MSP teams, particularly when serving multiple customers. This is where CloudBolt’s Cloud Management capabilities come into play. The Total Cost Spend dashboard addresses this complexity by providing unified visibility into resource consumption across all client environments, automatically categorizing AI workload costs by type and tenant.

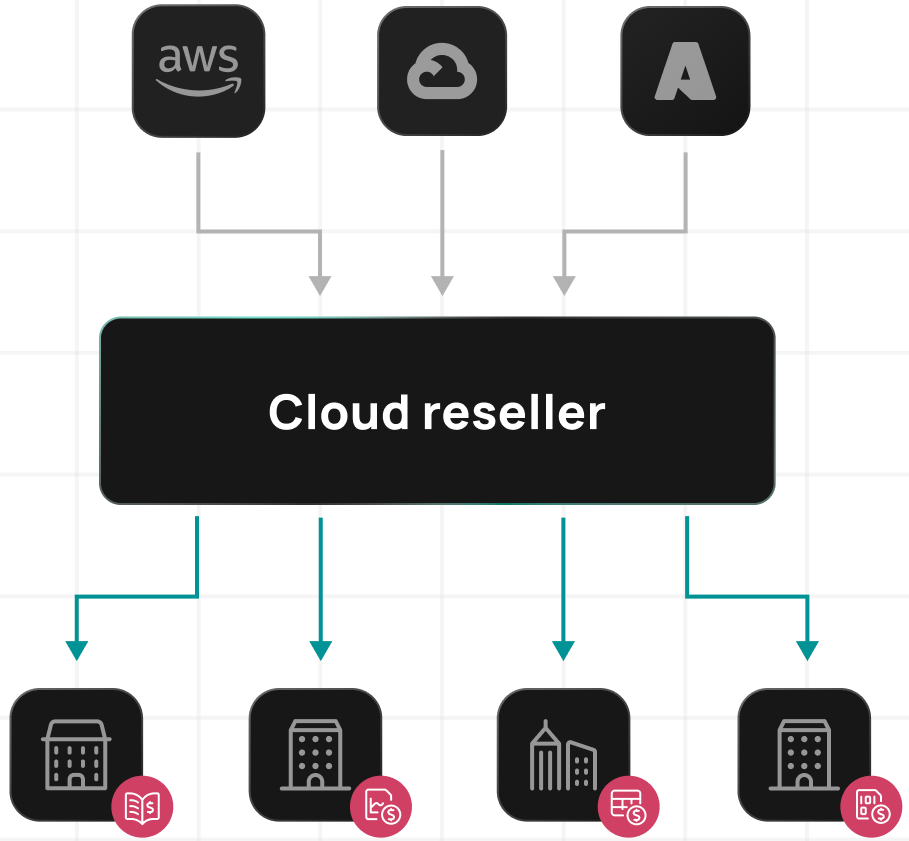
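To make the billing distinctions above concrete, an MSP can meter training GPU hours, inference GPU hours, storage, and API calls separately and rate each one per tenant. The sketch below assumes a simple hypothetical record format and hypothetical MSP resale rates that are not Azure list prices; it does not reflect how CloudBolt or Azure Cost Management structure their data.

```python
from collections import defaultdict

# Hypothetical resale rates an MSP might charge; these are not Azure prices.
RATES = {
    "gpu_hours_training": 3.20,   # per GPU hour
    "gpu_hours_inference": 2.10,  # per GPU hour
    "storage_gb_month": 0.05,     # per GB-month
    "api_calls_1000": 0.40,       # per 1,000 calls
}


def rate_usage(records: list[dict]) -> dict:
    """Aggregate raw usage records into billable line items per tenant.

    Each record is assumed to look like
    {"tenant": "contoso", "meter": "gpu_hours_training", "quantity": 12.5}.
    """
    bills = defaultdict(lambda: defaultdict(float))
    for rec in records:
        meter = rec["meter"]
        if meter not in RATES:
            raise KeyError(f"no rate configured for meter '{meter}'")
        bills[rec["tenant"]][meter] += rec["quantity"] * RATES[meter]
    result = {}
    for tenant, lines in bills.items():
        items = {m: round(v, 2) for m, v in lines.items()}
        items["total"] = round(sum(lines.values()), 2)
        result[tenant] = items
    return result
```

Rejecting unknown meters, rather than silently pricing them at zero, keeps new AI services from slipping through unbilled.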

Marketplace and co-selling/reselling opportunities

In addition to a technical foundation and joint client engagement activities, Microsoft supports several go-to-market routes: listing MSP offerings on Azure Marketplace, arranging joint co-selling engagements, and implementing cloud resell programs. Let’s look at each more closely.

Azure Marketplace

Microsoft’s commercial marketplace provides partners with a transactional platform for listing AI service offerings that clients can purchase alongside their Azure consumption. Program members can publish consulting services, managed applications, and SaaS solutions directly to the Azure Marketplace, where enterprise buyers actively search for AI implementation partners.

Marketplace listings benefit from integrated billing through Azure invoices, reducing procurement friction for clients who prefer consolidated cloud spending. The marketplace also provides visibility into buyer intent data and engagement analytics that help refine service positioning and identify high-potential opportunities.

Co-selling opportunities

Co-selling programs formalize the partnership between MSP sales teams and Microsoft account executives pursuing enterprise opportunities. When Microsoft identifies accounts with AI requirements beyond standard platform services, they can directly engage partner resources for implementation and ongoing management.

Co-sell engagements often involve joint solution proposals, shared deal registration, and coordinated account planning. For qualified opportunities, Microsoft may provide sales support, technical pre-sales resources, and deal incentives that improve partner win rates and expand deal sizes. Advanced partners receive priority routing for AI-related referrals and access to dedicated partner development managers who facilitate customer introductions.

Cloud resell opportunities

Cloud service provider (CSP) reselling arrangements enable MSPs to bill clients directly for Azure consumption while maintaining control over margins and customer relationships. However, managing AI workloads across multiple client tenants introduces operational complexity that traditional CSP tools don’t adequately address.

MSPs need visibility into resource utilization patterns across customers to identify optimization opportunities, enforce consistent governance policies across disparate environments, and accurately allocate shared platform costs when clients consume services from a common infrastructure.

CloudBolt’s cloud billing platform helps MSPs manage these multi-tenant complexities by providing unified visibility, policy enforcement, and cost optimization across all client environments. This way, partners can manage resell subtenants effectively, reconcile bills automatically, and protect margins.
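For the shared-platform case mentioned above, a proportional split based on each tenant's measured usage is a simple starting point. The sketch below works in whole cents and assigns leftover cents by largest remainder, so allocations always add up to the invoice; the usage metric (for example, GPU hours or API calls), the tenant names, and the function name are assumptions, and usage values are assumed to be non-negative.

```python
def allocate_shared_cost(shared_cost: float,
                         usage_by_tenant: dict[str, float]) -> dict[str, float]:
    """Split a shared platform cost across tenants in proportion to their usage.

    Works in whole cents and hands leftover cents to the tenants with the
    largest fractional remainders, so the allocations sum to the bill.
    """
    total_usage = sum(usage_by_tenant.values())
    if total_usage <= 0:
        raise ValueError("total usage must be positive")
    total_cents = round(shared_cost * 100)
    exact = {t: total_cents * u / total_usage for t, u in usage_by_tenant.items()}
    cents = {t: int(v) for t, v in exact.items()}  # floor for non-negative values
    leftover = total_cents - sum(cents.values())
    by_remainder = sorted(exact, key=lambda t: exact[t] - cents[t], reverse=True)
    for t in by_remainder[:leftover]:
        cents[t] += 1
    return {t: c / 100 for t, c in cents.items()}


if __name__ == "__main__":
    print(allocate_shared_cost(1000.00, {"contoso": 1, "fabrikam": 1, "tailspin": 1}))
```

Naive rounding of each share would bill $999.99 in the three-way split; the largest-remainder step assigns the missing cent to one tenant instead.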

Operational challenges and solutions

Managing resource volatility

Managing complex AI workload resource requirements creates planning challenges for MSPs accustomed to more predictable infrastructure consumption patterns. AI training jobs may suddenly require dozens of GPU-enabled virtual machines for days or weeks, then release all resources when training is complete. Inference workloads scale based on application usage but may require a minimum capacity for acceptable response times even during low-traffic periods.

| Challenge | Traditional Approach | AI-Optimized Solution |
|---|---|---|
| Training workload bursts | Reserved instance commitments | Spot pricing with fault tolerance, offering 70-90% savings (but jobs may be interrupted) |
| Inference capacity planning | Fixed provisioning | Autoscaling with minimum thresholds |
| Multi-tenant GPU sharing | Dedicated instances | Container-based GPU partitioning |
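The "autoscaling with minimum thresholds" approach can be expressed as a replica calculation with a floor and a ceiling. This is a sketch, not an Azure autoscale configuration: the per-replica capacity, the 70% target utilization, and the parameter names are hypothetical inputs that would normally come from load testing.

```python
import math


def desired_replicas(requests_per_sec: float, capacity_per_replica: float,
                     min_replicas: int, max_replicas: int,
                     target_utilization: float = 0.7) -> int:
    """Replica count for an inference endpoint with a floor and a ceiling.

    The floor keeps warm capacity for acceptable latency during quiet periods,
    the ceiling caps spend, and target_utilization leaves headroom for spikes.
    """
    if capacity_per_replica <= 0 or not 0 < target_utilization <= 1:
        raise ValueError("invalid capacity or utilization target")
    needed = math.ceil(requests_per_sec / (capacity_per_replica * target_utilization))
    return max(min_replicas, min(max_replicas, needed))
```

At zero traffic the endpoint still keeps its minimum replicas; at 100 requests per second with 10 per replica, it scales to 15 rather than 10 because of the utilization headroom.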

Cost optimization strategies

Optimizing costs for compute-intensive AI operations requires different strategies than traditional application hosting. Reserved instance commitments suit steady, predictable usage, not experimental workloads with unpredictable timing and duration. Spot instance pricing offers substantial savings for fault-tolerant training jobs that can tolerate interruptions. Batch inference jobs can be scheduled during off-peak hours to use capacity that would otherwise sit idle.
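Fault tolerance on spot capacity usually comes down to checkpointing: save training state periodically so an evicted job resumes instead of restarting from scratch. The simulation below is a sketch, not a real training framework: `Evicted` stands in for an eviction notice, the local JSON file stands in for durable storage such as a blob container, and the loss update is a placeholder for an optimizer step.

```python
import json
import os
import tempfile
from typing import Optional


class Evicted(Exception):
    """Stands in for a spot VM eviction in this simulation."""


def load_checkpoint(path: str) -> dict:
    if os.path.exists(path):
        with open(path) as f:
            return json.load(f)
    return {"step": 0, "loss": 1.0}  # fresh start


def save_checkpoint(path: str, state: dict) -> None:
    # Write to a temp file, then rename, so an eviction mid-write cannot
    # leave a corrupt checkpoint behind.
    tmp = path + ".tmp"
    with open(tmp, "w") as f:
        json.dump(state, f)
    os.replace(tmp, path)


def train(total_steps: int, ckpt_path: str, checkpoint_every: int = 10,
          evict_at_step: Optional[int] = None) -> dict:
    state = load_checkpoint(ckpt_path)  # resume if a checkpoint exists
    while state["step"] < total_steps:
        if evict_at_step is not None and state["step"] == evict_at_step:
            raise Evicted(f"evicted at step {state['step']}")
        state["step"] += 1
        state["loss"] *= 0.99  # placeholder for a real optimizer step
        if state["step"] % checkpoint_every == 0 or state["step"] == total_steps:
            save_checkpoint(ckpt_path, state)
    return state


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as d:
        path = os.path.join(d, "ckpt.json")
        try:
            train(100, path, evict_at_step=25)
        except Evicted as e:
            print(e, "| resuming from step", load_checkpoint(path)["step"])
        print("finished at step", train(100, path)["step"])
```

The checkpoint interval is the trade-off: checkpointing more often loses less work per eviction but spends more time writing state.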

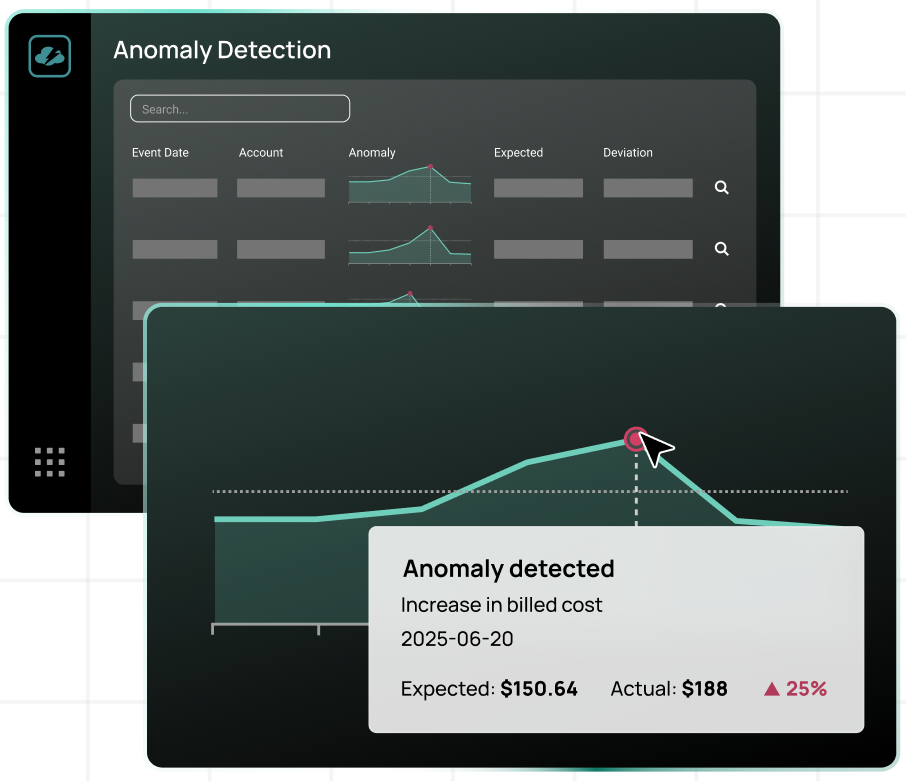

This is where CloudBolt’s Anomaly Detection can be particularly helpful. Once enabled, it uses AI to continuously learn your environment’s spending patterns, flag unusual spikes in real time, and trigger automated responses before the cost hits your books. Because its machine learning models are tuned to each cloud environment’s unique patterns, it also helps you forecast with greater confidence.
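The sketch below does not reproduce CloudBolt's models. It illustrates the general idea with a much simpler, generic technique, a rolling z-score over daily spend; the 14-day window and the threshold of 3 standard deviations are assumptions, and a production detector would also account for seasonality, trends, and planned training bursts.

```python
import statistics


def flag_spend_anomalies(daily_spend: list[float], window: int = 14,
                         threshold: float = 3.0) -> list[int]:
    """Return indexes of days whose spend deviates sharply from the trailing window."""
    anomalies = []
    for i in range(window, len(daily_spend)):
        history = daily_spend[i - window:i]
        mean = statistics.fmean(history)
        stdev = statistics.pstdev(history)
        if stdev == 0:
            # Perfectly flat history: any change at all is unusual.
            if daily_spend[i] != mean:
                anomalies.append(i)
            continue
        if abs(daily_spend[i] - mean) / stdev > threshold:
            anomalies.append(i)
    return anomalies


if __name__ == "__main__":
    spend = [100, 102, 98, 101, 99, 100, 103, 97, 100, 101, 99, 102, 98, 100,
             101, 450, 100]
    print(flag_spend_anomalies(spend))  # the $450 day stands out
```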

Security and privacy considerations

Data security and privacy considerations become more complex when AI applications process sensitive information. Training data must remain protected throughout the machine learning pipeline—during storage, preprocessing, and model training. Inference requests may contain sensitive personal information that requires encryption and access controls. Model parameters sometimes encode sensitive patterns from training data, necessitating careful management of model artifacts.

Best practices for MSPs

Build comprehensive AI service catalogs

Building comprehensive AI service catalogs helps clients understand available capabilities and appropriate use cases for AI augmentation. As with individual service offerings, lead each catalog entry with a business outcome and state its implementation complexity, typical timeline range, and prerequisites, such as minimum training data volumes.

Develop repeatable deployment methodologies

Developing repeatable deployment methodologies accelerates project delivery and improves consistency across client engagements. Document architecture patterns for common scenarios:

- Document analysis pipelines with OCR, entity extraction, and classification stages

- Predictive maintenance workflows connecting IoT sensors to forecasting models

- Customer service chatbot implementations using natural language understanding

Create template infrastructure definitions using tools like Terraform or Azure Resource Manager templates. Build reusable data preprocessing modules that handle everyday tasks, such as data cleaning, feature engineering, and train-test splitting. Pair these repeatable deployment patterns with repeatable billing models to achieve profitability at scale. Standardized service tiers with clear usage metrics prevent revenue erosion when technical delivery outpaces financial operations.
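As an example of such a reusable module, a train-test split should be deterministic so that results stay comparable across reruns and model versions. This is a minimal standard-library sketch; for time-series use cases such as demand forecasting, a chronological split is usually more appropriate than a random shuffle.

```python
import random
from typing import Sequence, TypeVar

T = TypeVar("T")


def train_test_split(rows: Sequence[T], test_fraction: float = 0.2,
                     seed: int = 42) -> tuple[list[T], list[T]]:
    """Shuffle rows reproducibly and split them into (train, test) lists.

    A fixed seed makes the split repeatable across client engagements and
    reruns, which matters when comparing model versions.
    """
    if not 0 < test_fraction < 1:
        raise ValueError("test_fraction must be between 0 and 1")
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)  # local RNG; global state untouched
    n_test = max(1, round(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]
```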

Create practical training and handoff processes

Creating practical client training and handoff processes helps keep AI systems valuable after initial implementation. Business users need training on interpreting model outputs, understanding confidence scores, and recognizing when to override automated predictions. Technical operators require knowledge of monitoring dashboards, alert responses, and retraining procedures. Executive stakeholders benefit from reporting on the AI system’s business impact and ROI metrics.

Establish monitoring and alerting

Establishing monitoring and alerting for AI applications requires instrumentation beyond standard infrastructure observability:

- Track model performance metrics (such as accuracy, precision, and recall) over time to detect any degradation.

- Monitor data distribution statistics to identify drift, indicating when retraining becomes necessary.

- Set up alerting for inference latency spikes that might indicate resource constraints or integration bottlenecks.
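Drift in input data can be quantified with the Population Stability Index (PSI), which compares a feature's distribution in production against its training baseline. The sketch below is one simple implementation using equal-width bins over the baseline range; the bin count and epsilon are assumptions, and the thresholds in the note that follows are a commonly cited rule of thumb rather than a standard.

```python
import math


def population_stability_index(baseline: list[float], current: list[float],
                               bins: int = 10) -> float:
    """Compare two samples of one feature using the Population Stability Index.

    Bin edges come from the baseline's range; values outside it are clamped
    into the first or last bin, and a small epsilon avoids log(0) for empty bins.
    """
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        return [c / len(values) for c in counts]

    eps = 1e-6
    psi = 0.0
    for b, c in zip(proportions(baseline), proportions(current)):
        b, c = max(b, eps), max(c, eps)
        psi += (c - b) * math.log(c / b)
    return psi
```

A PSI below about 0.1 is often read as stable, 0.1 to 0.25 as moderate drift worth watching, and above 0.25 as a signal to investigate or retrain.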

Plan for technology evolution

Planning for technology evolution and platform updates helps prevent the accumulation of technical debt as AI services mature. Microsoft regularly updates Azure AI services with new capabilities and improved performance, so MSPs should schedule regular reviews of deployed solutions against current best practices. Budget for occasional refactoring as frameworks evolve and better approaches emerge, and maintain test environments where you can evaluate updates before deploying them to production.

Balance automation with human expertise

Balancing automation with human expertise in AI management largely determines operational efficiency and service quality. Automated pipelines should handle routine tasks, such as scheduled model retraining, collecting performance metrics, and standard deployment workflows. Human expertise remains necessary for architecture decisions, troubleshooting unusual failures, and evaluating model outputs for business appropriateness. Define clear escalation paths from automated systems to skilled practitioners.


Conclusion

The Microsoft AI Cloud Partner Program offers MSPs a structured pathway to develop AI service capabilities that address the growing demand for intelligent applications among enterprises. The program’s tiered benefits align with MSP maturity levels, enabling the progressive development of expertise and market positioning as your organization expands its AI offerings.

As AI adoption accelerates across enterprise segments, MSPs possessing proven implementation capabilities and operational excellence will differentiate themselves in competitive markets. The program offers the foundation; MSPs’ investment in people, processes, and practical experience determines whether that foundation supports sustainable growth in the AI services market.
