As businesses embrace the public cloud for their infrastructure needs, balancing cost efficiency and optimal performance is essential. Many organizations struggle to allocate the right amount of resources to their EC2 instances. Under-provisioned resources often lead to a poor user experience and loss of business due to disappointed clients, while over-provisioned resources result in unnecessary costs and reduced profit.

EC2 sizing is a strategic approach to match the resources of your EC2 instances to your application’s requirements. You can reduce costs significantly by aligning your EC2 instances with resource demands while ensuring optimal performance.

This article discusses the concept of correct EC2 sizing, explores its benefits, and provides guidance on best practices to implement it effectively.

Best practices in EC2 sizing

We give a summary of EC2 sizing best practices below.

| Best practice | Description |
| --- | --- |
| Choose appropriate instance types | Instance types offer a wide range of hardware configurations. Choose one relevant to your workload. |
| Monitor resource utilization | Use monitoring tools to ensure hardware is not over-provisioned or under-provisioned. |
| Scale automatically | Scaling instances out can be more effective than having fewer, higher-specced instances. |
| Reserve persistent instances | A long-term commitment to using an instance offers savings. |
| Use Spot Instances for interruptible workloads | Spot Instances are cost-effective for batch processing or other stateless and fault-tolerant workloads. |
| Consider burstable performance instances for varied workloads | Burstable instances provide a stable baseline performance, suitable for general-purpose workloads, and can burst the performance for short periods when necessary. |
| Choose the appropriate storage type | Like instance types, various storage types are also available to attach to an instance. Choosing the right type for your workload is essential to running cost-effectively. |
| Regularly review and optimize | Regularly monitor and optimize your infrastructure to remain cost-effective even as workloads and circumstances change. |

#1 Choose appropriate instance types

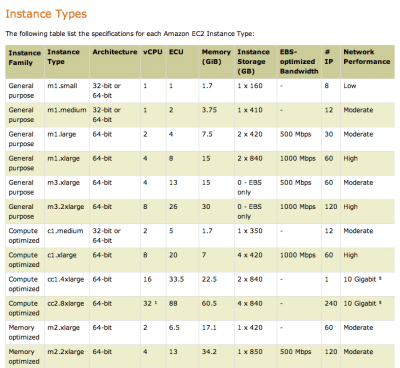

AWS offers various instance types, each tailored to specific workloads and use cases. At the time of writing, in the us-east-1 Region alone, there are over 440 instance types that cost anything between $0 (t3.micro: 2vCPU and 1 GiB Memory with Amazon Linux on the free tier) and over $92 per hour (r6a.48xlarge: 192vCPU and 1536 GiB Memory with Windows Server and SQL Enterprise included). You can find the complete list of currently available instance types here.

Choosing the correct EC2 instance type is a critical decision that significantly impacts both performance and cost efficiency. To select the most appropriate instance type, identify your workload’s characteristics, such as compute intensity, memory utilization, and I/O patterns. You should also consider storage capacity, performance requirements, and networking capabilities alongside specialized features like GPU support to avoid unnecessarily high costs. For example, if the machine is a basic bastion jump-box, you would not provision a Windows Server with a SQL Enterprise license and a dedicated GPU, as this would be an unnecessarily expensive waste of resources.

AWS’s native tool, Amazon Q, recommends an instance based on a natural language description of your use case. However, it is unlikely to suggest the most efficient instance type on the first attempt. For example, if your description refers to deep learning inference, Q may suggest the inf2 instance type. However, even the inf2 family has specifications ranging from 4vCPU and 16GiB Memory to 192vCPU and 768GiB Memory. Further consideration is required to choose the most suitable instance type for your use case.
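One way to narrow the choice further is to filter a list of candidate instance types by the minimum vCPU and memory your workload needs and then pick the cheapest match. The Python sketch below is only an illustration of that idea, not an AWS API. The `CATALOG` list is a hand-written sample: the two m7g entries use the prices quoted in this article, while `example.xlarge` is a made-up placeholder. In practice, you would populate the list from current AWS pricing data.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class InstanceType:
    name: str
    vcpu: int
    memory_gib: float
    hourly_usd: float


# Hypothetical sample catalog (assumption): replace with real pricing data.
CATALOG = [
    InstanceType("m7g.medium", 1, 4, 0.0408),
    InstanceType("m7g.large", 2, 8, 0.0816),
    InstanceType("example.xlarge", 4, 16, 0.1632),  # made-up entry
]


def cheapest_fit(
    catalog: Sequence[InstanceType], min_vcpu: int, min_memory_gib: float
) -> Optional[InstanceType]:
    """Return the cheapest instance type that meets both minimums, or None."""
    candidates = [
        i for i in catalog
        if i.vcpu >= min_vcpu and i.memory_gib >= min_memory_gib
    ]
    return min(candidates, key=lambda i: i.hourly_usd, default=None)


choice = cheapest_fit(CATALOG, min_vcpu=2, min_memory_gib=6)
print(choice.name if choice else "no match")
```

A real selection would also weigh the other factors discussed above, such as storage, networking, and GPU support, which this sketch deliberately leaves out.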

#2 Monitor resource utilization

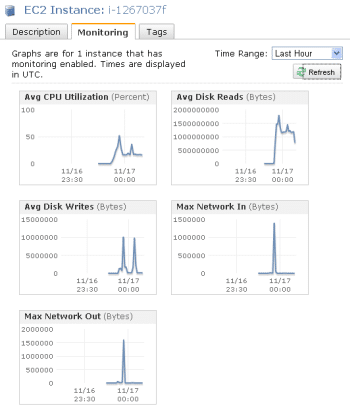

It is challenging to determine exactly what resources your applications will need before deployment. You could make an educated guess on resourcing requirements based on existing or predicted customer levels. However, at the start, service performance concerns often lead to over-provisioning, while cost concerns can result in the opposite. Hence, monitoring AWS EC2 resource utilization is crucial for optimizing performance, identifying bottlenecks, and managing costs effectively.

Setting up alarms and thresholds on EC2 performance metrics, such as CPU utilization, network throughput, disk I/O, and memory usage, helps you receive notifications when resource utilization exceeds predefined limits. You can then adjust EC2 sizing accordingly.

For example, let’s say you provision a single m7g.large instance with 2vCPU and 8GiB Memory at $0.0816 per hour. However, your resource utilization graphs show that you never exceed 50% CPU or memory usage. You could then replace the instance with a single m7g.medium instance (1vCPU and 4GiB Memory at $0.0408 per hour) for a cost saving of 50%.
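The downsizing check in this example can be expressed as a small Python sketch. The function names and the sample utilization readings are hypothetical. The 50% limit mirrors the example above and assumes that an instance with half the vCPU and memory can carry the load if observed peaks stay at or below that limit.

```python
from typing import Sequence


def can_downsize_by_half(
    cpu_percent: Sequence[float],
    memory_percent: Sequence[float],
    limit: float = 50.0,
) -> bool:
    """True if neither CPU nor memory ever exceeded `limit` percent.

    Both sequences must contain at least one sample.
    """
    return max(cpu_percent) <= limit and max(memory_percent) <= limit


def saving_fraction(current_hourly: float, proposed_hourly: float) -> float:
    """Fraction of the hourly cost saved by switching instance size."""
    return 1 - proposed_hourly / current_hourly


# Hypothetical utilization samples, in percent.
cpu = [22.0, 41.5, 38.0, 47.9]
memory = [30.0, 44.0, 49.0, 35.5]

if can_downsize_by_half(cpu, memory):
    saved = saving_fraction(0.0816, 0.0408)
    print(f"Downsize candidate, saving {saved:.0%} per hour")
```

In practice, you may prefer a lower limit than 50% to leave headroom for unexpected spikes.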

Historical data and insights also enable you to analyze trends, identify workload patterns, and make informed decisions about scaling and right-sizing your EC2 instances over more extended periods. You can identify peaks and troughs in demand that suggest ideal times to change the amount of resources you use.

Third-party tools like CloudBolt provide recommendations and insights that exceed AWS’s native offerings and automatically implement cost-efficiency improvements. This extra visibility into your EC2 resource utilization and automated responses allow you to make data-driven decisions to optimize your AWS infrastructure further.

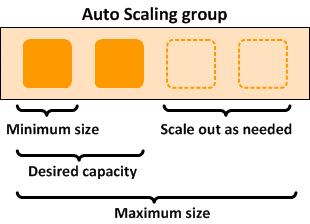

#3 Scale automatically

EC2 Auto Scaling automatically adjusts the number of EC2 instances available in response to changing workload demands. It ensures you have the right capacity at the right time and optimizes cost efficiency. You can define scaling policies based on:

- CPU utilization

- Network traffic

- Custom application metrics.

EC2 Auto Scaling automatically launches new instances or terminates existing ones based on your defined minimums and maximums. When correctly configured, the elasticity ensures that your application handles sudden spikes in traffic and scales down during periods of low demand while maintaining high availability.

To appreciate the cost implications of auto-scaling, consider this example of running an application that requires 2vCPU and 8GiB Memory during its peak usage times, which account for 25% of a day:

| Instance type | Specification | Cost per hour | Cost to fulfill requirements per day |
| --- | --- | --- | --- |
| m7g.medium | 1 vCPU, 4 GiB memory | $0.0408 | ($0.0408 x 24) + ($0.0408 x (24 x 0.25)) = $1.2240 |
| m7g.large | 2 vCPU, 8 GiB memory | $0.0816 | $0.0816 x 24 = $1.9584 |

Provisioning one m7g.medium at all times and a second m7g.medium during the 25% of the day that constitutes peak usage is cheaper than running a single m7g.large at all times. The medium instances still allow your application to run effectively during higher usage times.
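The arithmetic for this scenario can be checked with a short Python sketch. The function name and its parameters are our own. The prices and the 25% peak window come from the example above, and the calculation ignores the time instances take to launch or terminate.

```python
HOURS_PER_DAY = 24


def daily_cost(
    hourly_usd: float,
    always_on: int = 1,
    peak_extra: int = 0,
    peak_fraction: float = 0.0,
) -> float:
    """Daily cost of `always_on` instances plus `peak_extra` instances
    that only run for `peak_fraction` of the day."""
    base = always_on * hourly_usd * HOURS_PER_DAY
    peak = peak_extra * hourly_usd * HOURS_PER_DAY * peak_fraction
    return base + peak


# One m7g.medium all day plus a second one during the 25% peak window.
scaled = daily_cost(0.0408, always_on=1, peak_extra=1, peak_fraction=0.25)
# One m7g.large all day.
fixed = daily_cost(0.0816)

print(f"auto-scaled: ${scaled:.4f}")  # auto-scaled: $1.2240
print(f"fixed size:  ${fixed:.4f}")   # fixed size:  $1.9584
print(f"saving:      {1 - scaled / fixed:.1%}")  # saving:      37.5%
```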

#4 Reserve persistent instances

Reserved Instances (RIs) allow you to commit to a specific instance configuration for significant cost savings of up to 72%. You can commit for one or three years in exchange for a discounted hourly rate compared to On-Demand instances. As the name suggests, zonal Reserved Instances also reserve capacity for a particular instance type within a specific Availability Zone (AZ). If demand within that AZ spikes for any reason, you can still start that particular reserved instance.

RIs come in three types, each offering different flexibility and pricing options.

- Standard RIs

- Convertible RIs

- Scheduled RIs

Standard RIs offer the highest savings but limited flexibility, while Convertible RIs allow you to modify instance attributes at a slightly lower discount. Scheduled RIs are suitable for workloads with specific time windows.

AWS Savings Plans are a viable alternative to Reserved Instances. They also involve a long-term spending commitment and offer more flexibility in how the discount is applied, but they do not reserve capacity for any specific instance.

With careful planning and analysis of your workload patterns, you can effectively leverage RIs to maximize the value of your AWS infrastructure. Returning to our earlier example, using a reserved instance for the m7g.medium instance that would always be available while accepting on-demand pricing for the second instance that only scales online when demand dictates is cheaper than paying on-demand pricing for both instances.
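To make this comparison concrete, here is a minimal Python sketch that extends the earlier auto-scaling example. The 40% reservation discount is a hypothetical figure chosen purely for illustration. Actual discounts depend on the term, payment option, and instance type.

```python
HOURS_PER_DAY = 24


def daily_cost_with_reservation(
    hourly_usd: float, peak_fraction: float, ri_discount: float = 0.0
) -> float:
    """Daily cost of one always-on instance (optionally reserved) plus one
    On-Demand instance that runs only for `peak_fraction` of the day."""
    always_on = hourly_usd * (1 - ri_discount) * HOURS_PER_DAY
    peak_only = hourly_usd * HOURS_PER_DAY * peak_fraction
    return always_on + peak_only


# m7g.medium at $0.0408 per hour with a 25% peak window.
on_demand_only = daily_cost_with_reservation(0.0408, peak_fraction=0.25)
# Assumed 40% discount on the always-on instance (hypothetical value).
with_reservation = daily_cost_with_reservation(
    0.0408, peak_fraction=0.25, ri_discount=0.40
)

print(f"On-Demand for both instances: ${on_demand_only:.4f} per day")
print(f"Reserved base plus On-Demand: ${with_reservation:.4f} per day")
```

The reservation only pays off for the instance that runs continuously. Reserving the peak-only instance would mean paying for hours in which it is not needed.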

Challenges in RI commitment

Although Reserved Instance and Savings Plan savings can trickle from a master billing account to discrete sub-accounts, they can be complex to manage without the correct reporting and tools to provide the required usage visibility. Establishing which instances warrant a commitment can be challenging even in a relatively small environment with a single AWS account. This becomes more complex as infrastructure expands, additional AWS accounts are created, and management of different parts of the infrastructure is dispersed.

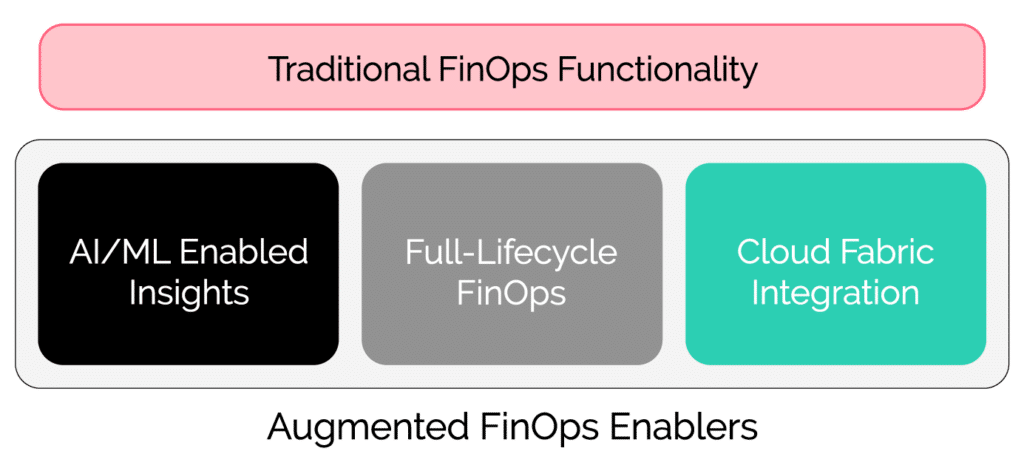

Third-party tools such as CloudBolt provide controls to model and distribute reservation savings across AWS accounts and enable shared savings across all stakeholders. CloudBolt’s augmented FinOps framework also integrates financial data with business performance metrics to guide Reserved Instances investment decisions. For example, you can use cost per core and cost per GB RAM to decide commitment volumes.

#5 Use Spot Instances for interruptible workloads

Spot Instances let you use AWS’s unused EC2 capacity at significantly lower prices than On-Demand costs. You may save up to 90%, provided your workload can tolerate interruptions.

The price for Spot Instances fluctuates based on supply and demand for spare capacity. You pay the current Spot price and can optionally set a maximum price you are willing to pay. Your instances may be interrupted if the Spot price exceeds your maximum price or if AWS needs the capacity back. A higher maximum price reduces the chance of price-based interruptions but does not protect against capacity being reclaimed.

Spot Instances are most suitable for fault-tolerant and scalable workloads or those with flexible start and end times. More specifically, they are well-suited for:

- Batch processing workloads that can be divided into smaller, independent tasks, like data processing, rendering, video transcoding, and large-scale analytics.

- Fault-tolerant applications capable of handling interruptions.

- Web applications with auto-scaling, containers, and microservices architectures.

- Short-duration testing and development environments, such as those used for experimenting or running integration tests.

- Big data processing workloads that distribute the processing across multiple instances using parallelization. They handle interruptions by restarting failed tasks.

- CI/CD Pipelines that involve short-lived instances for building, testing, and deploying applications.

- Features like Spot Instance hibernation and Spot Blocks also let you run some stateful workloads on Spot Instances.
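The common thread in the workloads listed above is that an interrupted task can simply be run again. The Python sketch below illustrates that pattern in isolation. It does not call any AWS API: the `Interrupted` exception, `run_until_done`, and `flaky_square` are hypothetical names, and the interruption is simulated.

```python
from collections import deque
from typing import Callable, Dict, Iterable


class Interrupted(Exception):
    """Raised by a task when its (simulated) Spot Instance is reclaimed."""


def run_until_done(
    tasks: Iterable[int],
    run_task: Callable[[int, int], int],
    max_attempts: int = 5,
) -> Dict[int, int]:
    """Run independent tasks, re-queueing any that are interrupted."""
    queue = deque((task, 1) for task in tasks)
    results: Dict[int, int] = {}
    while queue:
        task, attempt = queue.popleft()
        try:
            results[task] = run_task(task, attempt)
        except Interrupted:
            if attempt >= max_attempts:
                raise  # give up after too many interruptions
            queue.append((task, attempt + 1))  # retry later
    return results


def flaky_square(n: int, attempt: int) -> int:
    # Simulation: even-numbered tasks are interrupted on their first attempt.
    if n % 2 == 0 and attempt == 1:
        raise Interrupted(f"task {n} interrupted")
    return n * n


print(run_until_done([1, 2, 3, 4], flaky_square))
```

This only works when tasks are independent and safe to repeat, which is why stateless and fault-tolerant workloads are the natural fit for Spot Instances.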

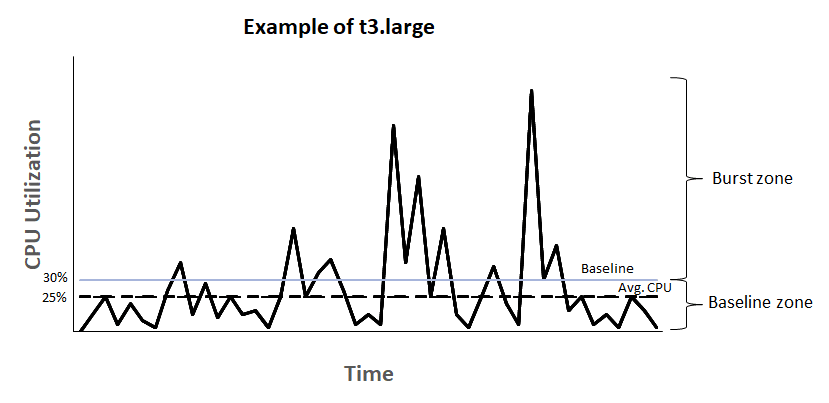

#6 Consider burstable performance instances for varied workloads

Burstable instances are an EC2 instance type designed for workloads with varying or fluctuating performance requirements. They provide a baseline level of CPU performance and accumulate CPU credits when the workload is below the baseline. You can use these credits during “burst” or high-demand periods to achieve higher CPU performance.

Two types of burstable instances are available, and each one behaves differently when the credit balance reaches zero.

- Standard burstable instances cannot burst while the credit balance is zero, limiting the machine’s performance to the baseline level.

- Unlimited burstable instances continue to burst as usual, but the AWS account is charged for purchasing any burst credits needed to sustain the required performance levels.

Both types of instances generally cost less than an equivalent specification machine, as the full capability of the machine is only available when bursting is enabled and burst credits are being depleted.

This burstable capability makes them suitable for applications that do not require sustained high CPU use but occasionally need to handle intensive tasks—for example, small-scale applications, development environments, and low-traffic websites. However, monitoring the CPUCreditBalance metric is essential to avoid bill shock.
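To see how a credit balance evolves, consider the following Python sketch of a Standard burstable instance. It relies on the rule that one CPU credit equals one vCPU running at 100% for one minute. The instance parameters (2 vCPUs with a 10% baseline), the assumed cap of 24 hours of earned credits, and the hourly utilization figures are illustrative assumptions, so check the values for your own instance type.

```python
from typing import List, Optional, Sequence


def simulate_credit_balance(
    hourly_utilization_percent: Sequence[float],
    vcpus: int,
    baseline_percent: float,
    start_balance: float = 0.0,
    max_balance: Optional[float] = None,
) -> List[float]:
    """Track the CPU credit balance hour by hour (Standard mode).

    One credit = one vCPU at 100% for one minute, so an instance earns
    vcpus * baseline% * 60 credits per hour and spends
    vcpus * utilization% * 60 credits per hour.
    """
    earned_per_hour = vcpus * baseline_percent * 60 / 100
    if max_balance is None:
        # Assumption: the balance is capped at 24 hours of earned credits.
        max_balance = earned_per_hour * 24
    balance = start_balance
    history = []
    for utilization in hourly_utilization_percent:
        spent = vcpus * utilization * 60 / 100
        # The balance cannot go below zero or above the cap.
        balance = min(max(balance + earned_per_hour - spent, 0.0), max_balance)
        history.append(balance)
    return history


# Two quiet hours at 5% CPU followed by two busy hours at 50% CPU.
print(simulate_credit_balance([5, 5, 50, 50], vcpus=2, baseline_percent=10))
```

A balance that repeatedly drops to zero suggests the workload is too demanding for the burstable instance: in Standard mode it will be throttled to the baseline, and in Unlimited mode it will incur extra charges.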

#7 Choose the appropriate storage type

Choosing the right EC2 storage type is crucial to EC2 sizing. AWS offers multiple storage options for EC2 instances, each designed to serve specific use cases.

Elastic Block Store

Elastic Block Store (EBS) provides block-level storage volumes that can be attached to EC2 instances. It offers various types, such as General Purpose SSD (gp2), Provisioned IOPS SSD (io1), Throughput Optimized HDD (st1), and Cold HDD (sc1). The choice depends on factors like I/O performance needs, latency sensitivity, and cost considerations.

Elastic File System

Elastic File System (EFS) provides scalable and shared file storage for EC2 instances. It is suitable for workloads that require concurrent access from multiple instances.

Simple Storage Service

S3 offers object storage that is highly durable, scalable, and cost-effective. It is ideal for storing large amounts of data that don’t require frequent access from EC2 instances.

Amazon Instance Store

Amazon Instance Store provides temporary, high-performance block-level storage directly attached to EC2 instances. It is suitable for workloads that require very high I/O performance and can tolerate data loss if an instance is terminated.

This subject warrants an article of its own to cover each consideration in-depth. However, it is important to note that:

- When choosing the right EC2 storage type, consider factors such as performance requirements, data durability, access patterns, scalability, and cost constraints.

- Understand your workload’s characteristics.

- Find options that balance reliability and performance with cost.

#8 Regularly review and optimize

AWS offers various techniques to ensure your EC2 instances efficiently balance resource usage and price, but this does not make EC2 sizing an easy one-time task. As your business grows, what constitutes the right balance today may be different from the right balance in the future. Regularly monitor, review, and reconfigure your environment to ensure continued cost-effectiveness.

AWS provides EC2 sizing recommendations in Cost Explorer to identify potential cost savings by downsizing or terminating instances. At the same time, Cost Optimization Hub helps to consolidate cost optimization recommendations across AWS accounts and AWS Regions.

In addition to this native tooling, third-party services such as CloudBolt allow businesses to go from “Cloud First” to “Cloud Right.” CloudBolt provides a unified platform for true cloud management, cost control, and advanced FinOps across all your cloud environments alongside AWS. You get a single pane of glass view of everything cloud FinOps related.

Conclusion

AWS EC2 sizing requires careful planning and ongoing cloud infrastructure monitoring. “Right-sized” is not a static state. The optimal balance between the required resources and their costs changes over time. As your business requirements, user demands, and AWS services change, what is right today may not be right tomorrow.

AWS offers a variety of native tools, services, and techniques to help keep your EC2 infrastructure cost-effective. However, no single offering provides a one-stop solution. To achieve cost-effectiveness, you must utilize all available tooling, which is often challenging.

CloudBolt can help achieve operational efficiency and reduce cost overruns by proactively analyzing resource performance against cloud expenditure. The platform assists in eliminating unused resources, establishing power schedules, rightsizing resources, and executing other cost-reducing activities at scale.
